This article is part of the Developer Productivity Engineering Camp blog series, brought to you by Tomoya Tabuchi (@tomoyat1) from the CI/CD team.

Introduction

Today, we will go over our recent efforts on a replacement for our continuous delivery (CD) system. We will discuss the background and approach for replacing our current CD system, then introduce the OSS projects we chose to use. We will also discuss the designs behind access control suitable for our multi-tenant environment, as well as the abstractions on top of the OSS projects for developers’ ease of use. We will conclude by showing work that is yet to be done as we provide the new platform features to our developers.

Background

Since the early days of the Microservices Platform at Mercari, we have used Spinnaker as our CD platform. It has provided us with a powerful pipeline feature on which to build our workflows for deploying microservices to Kubernetes, as well as authentication and authorization (authn/authz) for access control, so that only microservice owners have access to their deployment workloads.

However, as the number of microservices grew, Spinnaker became increasingly difficult to operate. Performance issues cause our developers' requests to edit or execute pipelines to fail. We spent a lot of time trying to improve performance, but without enough success, so we decided it was time to start considering alternatives.

Planning for the replacement

Spinnaker is a large tool packed with features, and many internal use cases depend on those features. After our initial investigation of existing solutions, we realized that choosing a single alternative product and replacing Spinnaker with it would not be enough. Instead, we observed that if the following "functions" are available, we can support most of the internal use cases.

- Reconciliation: applying Kubernetes manifests in Git to our clusters

- Workflows: a way to run a sequence of steps in a deployment

- Canary release: a way to gradually shift traffic from old to new Deployments to detect errors; this may or may not be implemented on top of Workflows

- Trigger: automatically triggering some or all of the above when there is a Docker image or manifest update

To start things off, we decided to focus on reconciliation and trigger for the first phase of our new CD system project. After much consideration, we decided to use two OSS projects: Argo CD and Flux. Argo CD handles reconciliation and triggering on manifest changes, while the automated image updates feature of Flux handles triggering on Docker image changes by turning image updates into manifest updates.

Argo CD

Argo CD is an open-source, GitOps-based continuous delivery system. In essence, it takes Kubernetes manifests from Git repositories and applies them to the targeted Kubernetes cluster. We chose it over other similar projects for its RBAC features and well-polished UI.

Key configuration elements for Argo CD are:

- Repositories: credentials for a Git repository with source Kubernetes manifests

- Clusters: a deployment destination cluster, optionally with a list of allowed namespaces and Kubernetes credential providers

- Applications: a configuration for deploying a set of manifests to a target cluster

- AppProjects: a logical collection of Applications with the same policies (RBAC, allowed destination clusters and source repositories)

In our case, the repository is our monorepo containing Kubernetes manifests, and a cluster is configured for each Kubernetes namespace (more on why we split them so finely later). An AppProject is created for each microservice, and an Application is used for a single set of manifests that should be deployed at once (for example, a Kustomize overlay).
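To make the mapping above concrete, here is a minimal sketch of what one Application for a single Kustomize overlay might look like. The repository URL, directory path, and the `argocd` namespace are hypothetical placeholders, and the automated sync policy is an assumption rather than a confirmed setting of our installation; only the cluster name `our-cluster.microservice-a` and the one-AppProject-per-microservice layout come from this article.

```yaml
# Hypothetical Application for one Kustomize overlay of microservice-a.
apiVersion: argoproj.io/v1alpha1
kind: Application
metadata:
  name: microservice-a-production      # hypothetical name
  namespace: argocd                    # assumes Argo CD runs in "argocd"
spec:
  # The AppProject created for this microservice.
  project: microservice-a
  source:
    # Placeholder URL for the manifests monorepo.
    repoURL: https://github.com/example-org/manifests-monorepo.git
    targetRevision: HEAD
    # Placeholder path to the overlay directory; Argo CD detects Kustomize
    # from the kustomization.yaml found there.
    path: microservices/microservice-a/overlays/production
  destination:
    # The per-namespace cluster configuration, referenced by name.
    name: our-cluster.microservice-a
    namespace: microservice-a
  syncPolicy:
    automated: {}                      # assumption: sync on manifest changes
```

Referencing the destination by `name` rather than by server URL is what lets several cluster configurations point at the same physical cluster while staying distinct to Argo CD.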

RBAC and multi-tenant clusters

Our Kubernetes clusters are multi-tenant, meaning that a given cluster could be shared by many microservices within the organization, with each microservice getting its own namespace. Our Argo CD installation will be multi-tenant as well. This means that we need access control over who can deploy to which namespaces through Argo CD. Our restrictions consist of two parts.

Argo CD RBAC is used to control which users can trigger a sync for a microservice's Application. We have configured our Argo CD installation to authenticate users through GitHub OAuth2. Combined with the GitHub teams that our microservices-starter-kit creates for each microservice, this lets us restrict access to Applications based on whether the user belongs to the correct GitHub team. Applications belong to the AppProject created for their microservice, and we enforce RBAC on each AppProject.
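As a rough sketch of how such a per-microservice AppProject could be expressed, the example below scopes sync access to one GitHub team. The organization and team names, the repository URL, and the `argocd` namespace are hypothetical, and the `org:team` group format assumes that GitHub login is provided through Argo CD's bundled Dex connector, which this article does not confirm.

```yaml
# Hypothetical AppProject for microservice-a.
apiVersion: argoproj.io/v1alpha1
kind: AppProject
metadata:
  name: microservice-a
  namespace: argocd                    # assumes Argo CD runs in "argocd"
spec:
  # Only the manifests monorepo may be used as a source (placeholder URL).
  sourceRepos:
    - https://github.com/example-org/manifests-monorepo.git
  # Only this microservice's cluster configuration and namespace are allowed.
  destinations:
    - name: our-cluster.microservice-a
      namespace: microservice-a
  roles:
    - name: owners
      description: Members of the team that owns microservice-a
      # Policy format: p, <subject>, <resource>, <action>, <project>/<app>, <effect>
      policies:
        - p, proj:microservice-a:owners, applications, get, microservice-a/*, allow
        - p, proj:microservice-a:owners, applications, sync, microservice-a/*, allow
      # Hypothetical GitHub team, in the org:team form emitted by Dex.
      groups:
        - example-org:microservice-a-team
```

Keeping the role inside the AppProject, instead of in the global RBAC policy, means each microservice's permissions live next to the repositories and destinations they apply to.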

However, there is still nothing stopping someone, or more likely a buggy abstraction, from creating an Application and deploying it to a namespace that the owner doesn’t own!

To solve this problem, we decided to use a separate cluster configuration for each microservice/namespace, along with GCP service account impersonation. This is very similar to the service account impersonation used in our secure Terraform monorepo CI.

We have a GCP service account for each microservice. This service account is given access only to the namespace belonging to the microservice. Meanwhile, Argo CD runs as a separate GCP service account that has permission only to impersonate the microservice service accounts. We have a separate cluster configuration for each namespace, with the setup needed to pass the impersonated GCP credentials to client-go in Argo CD at each sync operation. We will discuss the setup next.
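One way to limit a GCP service account to a single namespace on GKE is a namespaced Kubernetes RoleBinding whose subject is the service account's email. The sketch below illustrates that idea only; this article does not state which mechanism we use, and the binding name and the choice of the built-in `edit` ClusterRole are assumptions.

```yaml
# Hypothetical RoleBinding: grants the microservice's GCP service account
# access inside its own namespace and nowhere else.
apiVersion: rbac.authorization.k8s.io/v1
kind: RoleBinding
metadata:
  name: microservice-a-deployer        # hypothetical name
  namespace: microservice-a
subjects:
  # On GKE, a GCP service account authenticates as a User named by its email.
  - kind: User
    name: microservice-a@proj-with-sa.iam.gserviceaccount.com
    apiGroup: rbac.authorization.k8s.io
roleRef:
  # Assumption: the built-in "edit" ClusterRole, applied only within this
  # namespace because it is referenced from a RoleBinding.
  kind: ClusterRole
  name: edit
  apiGroup: rbac.authorization.k8s.io
```

Because the binding is namespaced, credentials obtained by impersonating this account cannot modify resources that belong to other microservices, even if an Application is misconfigured.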

The cluster configuration lets the Argo CD admin configure how Kubernetes credentials are obtained through client-go credential plugins. In particular, the admin can specify a command to run whenever the Kubernetes client needs credentials. The command is responsible for printing out the credentials, which the client code captures and uses for authentication. For our purpose there is the `gcloud config config-helper --impersonate-service-account=` command, but it outputs credentials in a format that only kubectl recognizes. So, we wrote a shim around the command that reformats its output into the format required by the credential plugin interface. Putting this all together, we get the following code.

    # Cluster configuration Secret
    apiVersion: v1
    kind: Secret
    metadata:
      name: our-cluster.microservice-a
    stringData:
      # Other entries of the cluster Secret are omitted here.
      config: |
        {
          "tlsClientConfig": { <snip> },
          "execProviderConfig": {
            "command": "/usr/local/bin/shim.sh",
            "args": [
              "microservice-a@proj-with-sa.iam.gserviceaccount.com"
            ],
            "apiVersion": "client.authentication.k8s.io/v1beta1"
          }
        }

The shim script referenced by "command" above:

    #!/bin/bash
    # The shim script

    main() {
      # jq filter that reshapes the gcloud output into an ExecCredential object.
      local filter
      filter=$(cat <<'EOT'
    {
      "apiVersion": "client.authentication.k8s.io/v1beta1",
      "kind": "ExecCredential",
      "status": {
        expirationTimestamp: .credential.token_expiry,
        token: .credential.access_token
      }
    }
    EOT
      )

      # $1 is the email of the service account to impersonate.
      gcloud config config-helper --impersonate-service-account="${1}" --format=json | jq "${filter}"
    }

    main "$@"

The above lets Argo CD impersonate the service account for microservice-a when an Application uses our-cluster.microservice-a as the destination. The shim and gcloud are added to our internal version of the Argo CD Docker image. In addition, AppProjects are used to restrict which clusters can be targeted by which Applications, so any Application owned by a microservice will use the correct cluster configuration.

Flux

While Argo CD solved many of our problems, it cannot update the Kubernetes manifests when a new container image is pushed. Luckily, Flux comes with a feature that automates updating manifests when a new tag is pushed to a Docker registry.

We chose a workflow in which image tags are written and updated in the Kubernetes manifests themselves, because this makes disaster recovery easier. The alternative would be to trigger the reconciliation by other means and have the CD system fill in the tags when applying (as in Spinnaker).

Automated image updates are configured through three CRDs:

- ImageRepository: configuration for Docker registries

- ImagePolicy: configures the rules to determine which Docker image tag is the latest

- ImageUpdateAutomation: configures the process of updating a manifest file in a repository

For our use, ImageRepository will be configured for our monorepo with Kubernetes manifests, and ImagePolicy and ImageUpdateAutomation will be configured for each workload (such as a Deployment) in each microservice.
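For readers unfamiliar with these CRDs, the following is a minimal sketch of the three resources as they appear in upstream Flux, where an ImageRepository names the container image to scan and the Git repository to update is referenced through a separate GitRepository source. All names, the image path, the branch, the directory path, the intervals, and the semver range are hypothetical, and the `apiVersion` depends on the installed Flux version.

```yaml
# Which container image repository to scan for new tags (placeholder image).
apiVersion: image.toolkit.fluxcd.io/v1beta2
kind: ImageRepository
metadata:
  name: microservice-a
  namespace: flux-system
spec:
  image: gcr.io/example-project/microservice-a
  interval: 5m
---
# How to decide which scanned tag counts as the "latest".
apiVersion: image.toolkit.fluxcd.io/v1beta2
kind: ImagePolicy
metadata:
  name: microservice-a
  namespace: flux-system
spec:
  imageRepositoryRef:
    name: microservice-a
  policy:
    semver:
      range: ">=1.0.0"                 # hypothetical rule
---
# How to write the selected tag back into the manifests in Git.
apiVersion: image.toolkit.fluxcd.io/v1beta2
kind: ImageUpdateAutomation
metadata:
  name: microservice-a
  namespace: flux-system
spec:
  interval: 5m
  sourceRef:
    kind: GitRepository
    name: manifests-monorepo           # hypothetical GitRepository object
  git:
    checkout:
      ref:
        branch: main
    commit:
      author:
        name: image-update-bot         # hypothetical commit identity
        email: image-update-bot@example.com
    push:
      branch: main
  update:
    # Only files under this placeholder path are scanned for marker comments.
    path: ./microservices/microservice-a
    strategy: Setters
```

Once the automation pushes a commit with the new tag, the manifest change is what Argo CD picks up, which is how an image update becomes a manifest update.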

Apart from this, a special comment needs to be placed in the manifest where the image to be rewritten is referenced. This comment specifies which ImagePolicy (the rules for determining the “latest” image) should be applied when updating that manifest. Both container image fields and Kustomize images fields are supported.

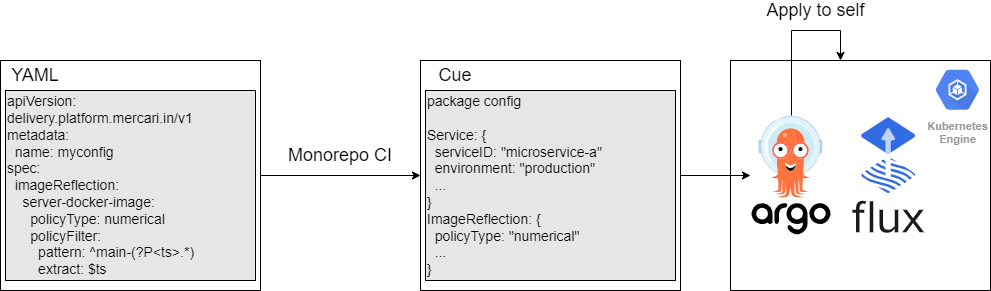
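As a hedged text illustration of the marker comment described above, the two documents below mark a container `image` field and a Kustomize `images` entry. The marker syntax is the one documented by upstream Flux; the image name, tag, labels, and the `flux-system:microservice-a` policy reference are hypothetical.

```yaml
# Marker on a container image field: the whole image reference is rewritten.
apiVersion: apps/v1
kind: Deployment
metadata:
  name: microservice-a
  namespace: microservice-a
spec:
  selector:
    matchLabels:
      app: microservice-a
  template:
    metadata:
      labels:
        app: microservice-a
    spec:
      containers:
        - name: app
          image: gcr.io/example-project/microservice-a:1.2.3 # {"$imagepolicy": "flux-system:microservice-a"}
---
# Marker on a Kustomize images entry: the ":tag" suffix rewrites only the tag.
apiVersion: kustomize.config.k8s.io/v1beta1
kind: Kustomization
resources:
  - deployment.yaml
images:
  - name: gcr.io/example-project/microservice-a
    newTag: 1.2.3 # {"$imagepolicy": "flux-system:microservice-a:tag"}
```

The value inside the marker has the form `<namespace>:<ImagePolicy name>`, optionally followed by `:tag` or `:name` to select only that part of the image reference.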

Additionally, to support Kubernetes Kit (k8s-kit), a recent internal effort to provide an abstraction for Kubernetes manifests in the Cue language, we maintain an internal fork of the underlying Kubernetes controller so that it can also rewrite Docker image references in Cue files.

Abstraction and integration with our internal ecosystem

Configuring all of the above through the CRDs requires both extensive knowledge of each part and permission to update details that we don't want all users to touch. To solve this, we have an abstraction layer that generates and applies the CRDs from minimal user input.

Our microservices-starter-kit generates the AppProject and cluster configurations for microservices with a one-line change to the Terraform module inputs. To the developer, this looks like enabling the platform feature for their microservice.

The rest of the CRDs are needed for each workload, and are configured through YAML files or k8s-kit. This configuration is converted to Cue code by the monorepo CI scripts and combined with the abstraction layer, which is also written in Cue (and is separate from k8s-kit), to generate the CRDs. The resulting set of Cue files is synced to the cluster where the CD components run by Argo CD itself, through a purpose-built Argo CD plugin. This configures Argo CD and Flux to do their jobs as described in the previous two sections.

The mechanism described above allows our developers to use the CD system with minimal knowledge and configuration of the underlying components.

Future work

Our idea for the new CD system is still in its early stages. Our next goal is to release the new system internally to a small group of early adopters to gather feedback on the idea, so that we can further improve upon the features and developer experience.

Moreover, the first phase of the project described above does not support expressing complex pipelines or doing canary releases. We plan to work towards adding these features into the abstraction at a later date.

Conclusion

In this post, we discussed our recent work on a replacement for our CD system. We went through our reasoning for replacing Spinnaker and our approach to doing so, then introduced the OSS projects we decided to use, Argo CD and Flux. We covered how we achieve strict access control for editing and executing deployments in a multi-tenant environment, as well as our abstraction over the components for a better developer experience. We hope to start delivering this platform feature soon, and we still have a lot of catching up to do in terms of supported features.

We are hiring! If you are passionate about providing CI/CD and other platforms to developers to assist and improve their productivity, please consider applying for these positions.