This article is part of the Developer Productivity Engineering Camp blog series, brought to you by Daisuke FUJITA (@dtan4) from the Platform Infra Team.

At Mercari, one of the core platform tenets is to manage all cloud infrastructure as declarative configuration. Our main cloud provider is Google Cloud Platform (GCP), and we use HashiCorp Terraform to manage infrastructure as code. The Platform Infra team provides an in-house CI service to run all Terraform workflows securely.

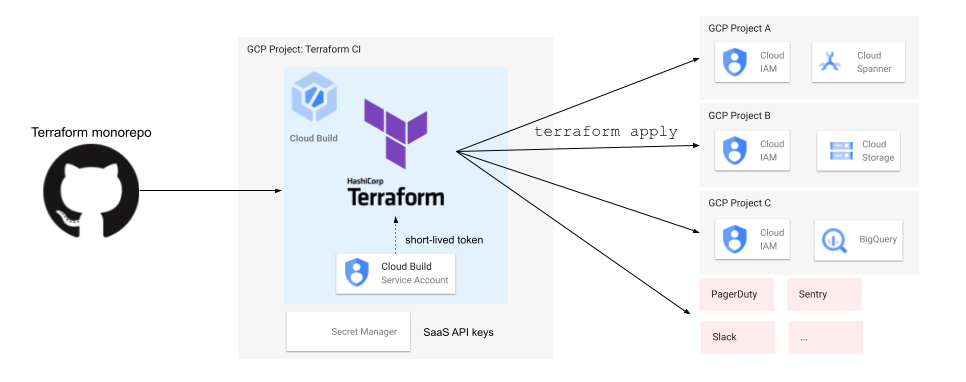

Terraform requires cloud provider credentials to provision resources. To keep the system simple, we started by storing these credentials as environment variables, but as Terraform usage grew, so did the blast radius of these credentials.

In this article, I’m going to explain the security problems we faced in our Terraform environment, and how we improved the situation.

Background

Terraform monorepo

To have centralized control over all infrastructure resources, one of our early decisions was to store all company-wide Terraform configurations in a single monorepo. Some key facts about this repo:

- Used by all companies within Mercari Group (Mercari JP, Mercari US, Merpay, Souzoh, etc.)

- Manages 500+ services across all companies and 1,000+ Terraform states (tfstate)

- Each service has its own dedicated folder. The service owners, who actually develop and manage the service, are the CODEOWNERS of that folder, so only they can approve changes to it

The following diagram shows the directory structure of this Terraform monorepo. Each service has its own directory with one subdirectory per environment, and each environment directory contains the Terraform configurations for that service’s GCP project.

├── script

├── terraform

│ ├── microservices

│ │ ├── <SERVICE_ID>

│ │ │ ├── development # manages resources in GCP project `<SERVICE_ID>-dev`

│ │ │ └── production # manages resources in GCP project `<SERVICE_ID>-prod`

Our initial CI implementation was based on CircleCI. When new commits were pushed to a feature branch, CircleCI ran terraform plan and posted the result as a pull request comment. The CI scripts ensured that Terraform ran only for the folders (services) in which files had been created, updated, or deleted. Once the CODEOWNERS approved the changes, the pull request could be merged, and CircleCI ran terraform apply on merge.

To access cloud providers, we stored provider credentials, such as GCP service account keys and SaaS API keys, in the CircleCI project’s environment variables.

Problems

We used this system for years, but it had several problems from a security perspective.

Permanent service account key

To manage GCP resources, Terraform requires GCP credentials. One way to provide them is a GCP service account key. To keep things simple, our Terraform provider initially used a static service account key, which never expires. If such a key leaks, a bad actor can use it until we revoke it manually.

Single and very strong service account

We used a single GCP service account to run Terraform for all GCP projects. As a result, its permissions in our GCP organization were far too broad: it had the Project Owner role (roles/owner) in every GCP project. A bad actor who gained access to this service account could do anything in our GCP organization, including breaking our production environment.

This architecture also technically allowed users to create resources in another service’s GCP project without that project owner’s approval. We assumed that each service’s directory managed resources only in its own GCP project, but because all services shared the same Terraform service account, a configuration could specify a different project ID and create resources there. Such a change needed approval only from the CODEOWNERS of the directory containing the file, who are not the owners of the target project. We implemented a lint script to check the project field, but we wanted the system itself to prevent this.

# terraform/microservices/mercari-xxx-jp/production/google_storage_bucket.tf

resource "google_storage_bucket" "test-bucket" {

  # We expected this value to be "mercari-xxx-jp-prod",

  # but Terraform could still create this resource

  project = "mercari-yyy-jp-prod"

  name = "test-bucket"

  location = "US"

}

Risk of arbitrary command execution

The CI pipeline configuration file (for CircleCI, .circleci/config.yml) lived in the same repository as the Terraform configurations. CODEOWNERS protected the file so that merging a change to the main branch required review by repository administrators, but this did not stop non-administrators from editing it on a feature branch. For example, anyone with write access to the repository could edit the CI pipeline to run arbitrary commands on CircleCI and execute it on their own branch without administrators’ approval.

Aside from this, arbitrary command execution was also possible through Terraform providers. For example, the External provider lets a Terraform configuration execute an arbitrary command.
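To make the risk concrete, here is a minimal sketch of how the External provider can run a command from a Terraform configuration. The resource names and the shell command are hypothetical and chosen only for illustration. Because data sources are read during terraform plan, a configuration like this on a feature branch would run with whatever credentials the CI job holds.

```hcl
# Hypothetical illustration: the "external" data source runs the given
# program whenever Terraform reads it, including during `terraform plan`.
data "external" "arbitrary_command" {
  # The program must print a JSON object with string values to stdout.
  # Here it reports the OS user the CI job runs as; it could just as
  # easily read environment variables or call other tools.
  program = ["sh", "-c", "echo '{\"user\": \"'$(whoami)'\"}'"]
}

output "ci_user" {
  value = data.external.arbitrary_command.result.user
}
```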

Secure Terraform CI

To solve these problems, we re-designed our Terraform CI and its permissions from scratch. I’m going to explain the key points of the new design.

Keyless architecture using Cloud Build

To get rid of the permanent service account key, we decided to migrate our CI platform from CircleCI to Cloud Build.

Each GCP project has its own Cloud Build service account, and the Cloud Build job can authenticate as the service account through temporary credentials issued in each build. We no longer need to create permanent service account keys and store them inside CI.

For other credentials such as SaaS API keys, we are using Secret Manager to store them.

(By the way, GitHub announced OpenID Connect (OIDC) support in GitHub Actions a few months ago (announcement), which allows us to connect to cloud providers such as AWS and GCP without creating and storing permanent keys in GitHub Actions. If you’re planning to build a new keyless CI now, this is also a good option.)

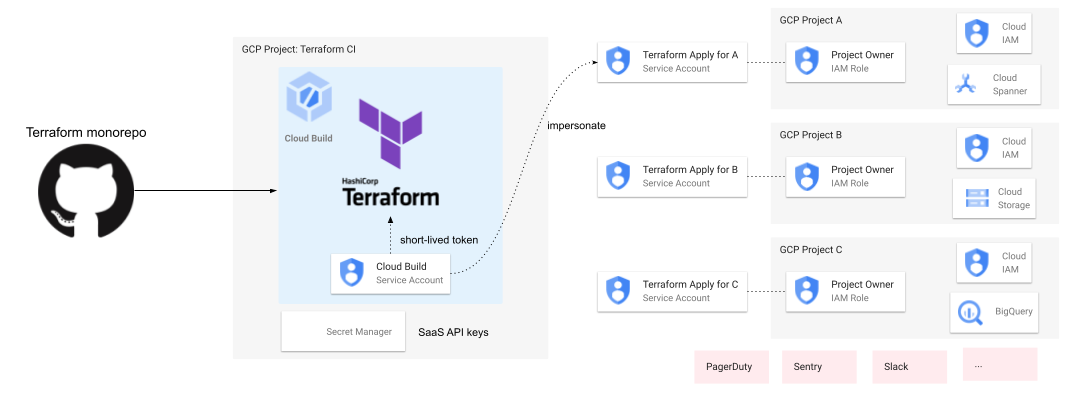

Service Account per service

To solve the second problem, we decided to split Terraform’s service account per service and per environment, and to give each account only the least privileges it needs.

Each service has its own service accounts for Terraform: a “Terraform Plan” service account, which has read-only permissions in the service project, and a “Terraform Apply” service account, which has the Project Owner role in the service project. As the names suggest, when CI executes terraform plan, it uses the “Terraform Plan” service account of the target project, because plan only reads data from GCP. For terraform apply, it uses the “Terraform Apply” service account.
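The pair of accounts for one service project could be declared roughly as follows. This is a sketch under assumptions: the account IDs and the project_id variable are hypothetical names, and the Project Viewer role stands in for the read-only permissions of the plan account.

```hcl
variable "project_id" {
  type        = string
  description = "Service project ID, e.g. <SERVICE_ID>-prod"
}

# Hypothetical account IDs; the real names are not shown in this article.
resource "google_service_account" "terraform_plan" {
  project      = var.project_id
  account_id   = "terraform-plan"
  display_name = "Terraform Plan"
}

resource "google_service_account" "terraform_apply" {
  project      = var.project_id
  account_id   = "terraform-apply"
  display_name = "Terraform Apply"
}

# Read-only access in this project only, used for `terraform plan`.
resource "google_project_iam_member" "plan_viewer" {
  project = var.project_id
  role    = "roles/viewer"
  member  = "serviceAccount:${google_service_account.terraform_plan.email}"
}

# Project Owner in this project only, used for `terraform apply`.
resource "google_project_iam_member" "apply_owner" {
  project = var.project_id
  role    = "roles/owner"
  member  = "serviceAccount:${google_service_account.terraform_apply.email}"
}
```

Both bindings are scoped to a single project, so neither account carries any permission outside the service it belongs to.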

GCP supports the “service account impersonation” feature, which allows a service account or user account to impersonate another service account to call GCP APIs using the impersonated service account’s privilege. Internally, this is done by creating short-lived credentials of target service accounts.

When CI runs a new job, Terraform (authenticated as the Cloud Build service account) impersonates the Terraform service account of the project being updated. For example, when the Terraform configurations for project A change, Terraform impersonates the “Terraform Plan” or “Terraform Apply” service account for project A. Since each service account has privileges only in its own service’s project, the system itself now prevents creating resources in other projects: terraform apply fails with a permission error.

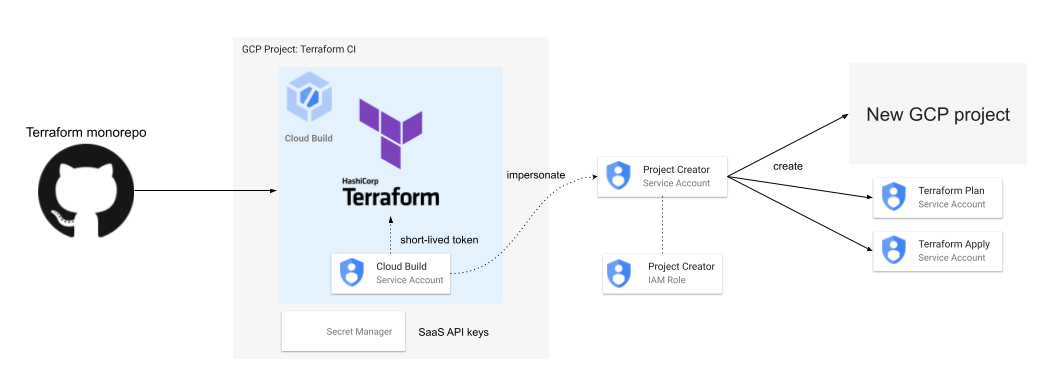
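On the Terraform side, impersonation can be expressed with the Google provider’s impersonate_service_account argument. The sketch below assumes CI passes the target project and the per-service account email in as variables; the variable names are hypothetical, and this article does not say whether the real setup uses this argument or another mechanism.

```hcl
variable "project_id" {
  type        = string
  description = "Target service project, e.g. <SERVICE_ID>-prod"
}

variable "terraform_service_account" {
  type        = string
  description = "Email of the per-service Terraform Plan or Apply service account"
}

provider "google" {
  project = var.project_id

  # The provider starts from the ambient credentials (here, the Cloud Build
  # service account) and exchanges them for a short-lived token of this
  # account. The caller needs roles/iam.serviceAccountTokenCreator on it.
  impersonate_service_account = var.terraform_service_account
}
```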

But how do we create these service accounts with appropriate permissions when we create a new GCP project? To create a new GCP project, we use another dedicated service account which has the Project Creator role in the organization. Once the project is created, the project creator, in this case the service account, has the Project Owner role in the project by default. CI creates the per-service Terraform service accounts and grants the Project Owner/Viewer roles to the accounts using this temporary Project Owner role.

However, if we didn’t revoke the Project Owner role from the project creator service account, it would remain a Project Owner in every new project, giving us yet another “single and very strong service account”. To prevent this, CI revokes the role automatically once the initial project setup is done. From then on, CI uses the newly created per-service Terraform service accounts.

CI scripts managed outside monorepo

To solve the third problem, we have decided to manage CI pipeline configurations outside the Terraform monorepo.

As with other CI services, Cloud Build needs a build configuration file that describes the CI pipeline. In the new secure CI, we don’t put this file in the Terraform monorepo. Instead, we manage it in a separate dedicated repository to which only platform administrators have write access. When we change the configuration file, it is uploaded to a dedicated Cloud Storage (GCS) bucket. When a new commit is pushed to the Terraform monorepo, our CI trigger fetches the build configuration from the GCS bucket and creates a new Cloud Build job.

One drawback is that when the Cloud Build configuration is stored outside the source code repository, we cannot use Cloud Build’s managed build triggers, including the Cloud Build GitHub App. We therefore implemented our own CI triggers and build status notifiers using Cloud Functions.

Pre-approved Terraform providers

To prevent users from using Terraform providers that behave improperly or allow arbitrary command execution, we decided to install administrator-approved Terraform plugin binaries into the base CI image in advance, and to let all Terraform processes use only these pre-installed plugins.

The terraform init command has a -plugin-dir=PATH option, which initializes the current directory using only the plugin binaries in the given directory, without downloading anything from the plugin registry. If the current directory contains a Terraform configuration that uses an unapproved provider, terraform init -plugin-dir=PATH fails and prevents further execution.
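As a rough illustration, assume the CI image ships only the Google provider in a hypothetical /opt/terraform/plugins directory. A configuration that also requires the External provider would then stop at terraform init:

```hcl
# CI would run (the directory path is a hypothetical example):
#   terraform init -plugin-dir=/opt/terraform/plugins

terraform {
  required_providers {
    # Assumed to be pre-installed in the CI image, so init can resolve it.
    google = {
      source = "hashicorp/google"
    }

    # Assumed NOT to be pre-installed. With -plugin-dir, Terraform does not
    # fall back to the registry, so `terraform init` fails here.
    external = {
      source = "hashicorp/external"
    }
  }
}
```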

Migration

As this repository manages resources for hundreds of services, migrating every service to the new CI system at once was practically impossible. Some services had Terraform configurations for other GCP projects, which the new CI does not support, or had “configuration drift”, meaning differences between the Terraform configurations and the actual infrastructure state caused by manual operations. We needed to resolve these before migration. Therefore, we migrated the CI gradually as follows:

- Update the CI script to choose the CI platform (CircleCI or the new secure CI) based on the service’s Terraform configuration

  - If the service is marked as “ready to use secure CI”, use secure CI. Otherwise, keep using CircleCI

- In each service,

  - Prepare the required resources (e.g. new Terraform service accounts and permissions)

  - If the service has Terraform configurations that belong in another service (e.g. resources in a different project), move them to the appropriate place

  - Mark the service as “ready to use secure CI”

With this approach, we could migrate all services to secure CI one by one.

Status

Last year, we introduced this new CI system to our main Terraform monorepo and other critical Terraform repositories. Beyond Terraform, we also introduced the same mechanism to our Kubernetes manifest monorepo, which manages all of our Kubernetes manifests and deploys them to our GKE clusters.

Conclusion

In this article, I explained the security problems we faced in our Terraform environment and how we improved the situation by building a new CI system. I hope these ideas help people who manage an Infrastructure as Code environment or software supply chain and want to improve its security.

Further Reading

- Presentation by @rung from Security Team: Attacking and Securing CI/CD Pipeline – Speaker Deck

- Presentation by @deeeet: How We Harden Platform Security at Mercari – Speaker Deck