I’m Kensei Nakada (@sanposhiho), an engineer of the Platform team at Mercari. I’m working on autoscaling/optimizing Kubernetes resources in the Platform team at Mercari, and I also participate in the development around SIG/Scheduling and SIG/Autoscaling at Kubernetes Upstream.

Mercari has a company-wide FinOps initiative, and we’re working on Kubernetes resource optimization actively.

At Mercari, the Platform team and the service development team have distinct responsibilities. The Platform team manages the basic infrastructure required to build services and provides abstracted configurations and tools to make them easy to work with. The service development team then builds the infrastructure according to the requirements of each service.

With a large number of services and teams, optimizing company-wide Kubernetes resources in such a situation presented many challenges.

This article describes how the Platform team at Mercari optimized Kubernetes resources so far, how we found it difficult to optimize them manually, and how we started to let Tortoise, an open source tool we released, optimize our resources.

Kubernetes resource optimization journey at Mercari

Kubernetes resource optimization has two perspectives:

- Node optimization: Instance rightsizing / Bin Packing to reduce unallocated resources in each Node. Change the machine type to an efficient / cheaper one.

- Pod optimization: Workload rightsizing to increase the resource utilization of each Pod.

For the former, the Platform can optimize by changing settings at the Kubernetes cluster level. The most recent example for this in Mercari was changing the Node instance type to T2D.

In contrast, the latter requires optimization at the pod level, requiring changes to Resource Request/Limit or adjustments to the autoscaler configuration in each service based on the characteristics of how resources are usually consumed in the service.

Resource optimization itself requires efficient use of resources without compromising service reliability, and such safe optimization often requires in-depth knowledge of Kubernetes.

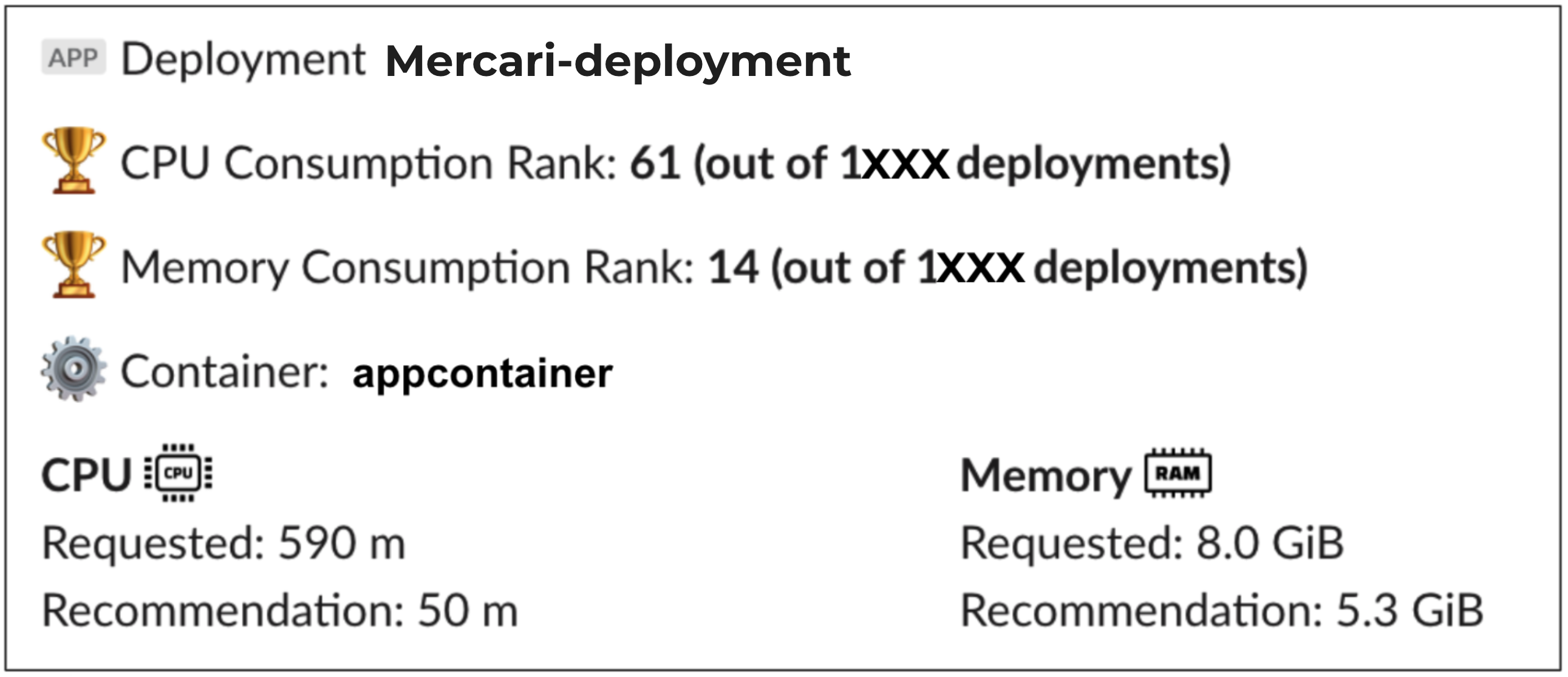

On the other hand, since Mercari adopts the microservices architecture, there are currently more than 1000 Deployments, and each microservice has its own development team.

In this situation, it is difficult to demand such in-depth knowledge from developers of all services, and there is also a limitation for the Platform team going around optimizing each individual service.

Therefore, the Platform team has provided tools and guidelines to simplify the optimization process as much as possible, and the development teams of each service have followed the guidelines to optimize Kubernetes resources across the company.

Kubernetes Autoscalers at Mercari

There are two official autoscalers provided by Kubernetes.

- Horizontal Pod Autoscaler (HPA): Increases or decreases the number of pods according to pod resource usage.

- Vertical Pod Autoscaler (VPA): Increases or decreases the amount of resources available to a Pod based on the Pod’s resource usage.

HPA is quite popular at Mercari, and almost all Deployments that are large enough to warrant its use are managed with HPA. In contrast, VPA is rarely used. HPA is most often configured to monitor the CPU usage, while Memory is managed manually in most cases.

To make the article easier to understand, we will give a light introduction to the HPA configuration.

HPA requires target resource utilizations (threshold) to be set for resources in each container. In the example below, the ideal utilization is defined as 60% for the CPU of the container named application. HPA adjusts the number of pods so that the resource utilization is close to 60%.

apiVersion: autoscaling/v2

kind: HorizontalPodAutoscaler

metadata:.

name: <HPA_NAME>

namespace: <NAMESPACE_NAME>

//...

metrics:

type: ContainerResource

containerResource:

name: cpu

container: application

target:

type: Utilization

averageUtilization: 60There are many other parameters available in HPA such as minReplicas which determines the minimum number of pods. Please refer to the official documentation for further details.

Resource Recommender Slack Bot

Mercari’s Platform team provides an internal tool called Resource Recommender for resource optimization purposes.This is a Slack Bot that calculates the optimal resource size (Resource Request) once a month and notifies every service development team. This is intended to simplify resource optimization.

Internally, it utilizes VPA: it calculates the best and safest values from the VPA recommendations of the past months.

However, we have some challenges with Resource Recommender.

The first challenge lies in the safety of recommended values. The recommended values start to get stale gradually after they are sent, and the accuracy of the recommended values fades away as time passes. Changes including application implementation changes, or changes in traffic patterns could result in the actual recommended values to change significantly compared when they were initially sent. Using outdated values could potentially lead to dangerous situations such as the application being OOMKilled in the worst case.

The second challenge is that service developers are not always willing to adopt these recommended values. Due to the possible issue with the automatically recommended values, developers need to carefully check if the values are really safe or not before applying them. They must also continue monitoring after applying these changes and make sure that there are no problems. This can take up a significant amount of engineers’ time in every team.

And the final challenge is that optimization never ends as long as the service keeps running. The recommended values will continue to change due to various changes in circumstances, Which means that developers have to continuously put effort tuning Kubernetes resources.

HPA optimization

Adding on the above issues, the most significant problem is the HPA.

In order to run your Pods with optimal resource utilization, you need to optimize HPA settings themselves instead of optimizing the size of your resource. However, Resource Recommender does not support the calculation of recommended values for HPA settings.

As mentioned earlier, Mercari has mostly HPAs for services of scale and they target CPU. It means that most of the CPUs used in the cluster cannot be optimized by Resource Recommender.

First, you have to consider increasing the target resource utilization (threshold) as high as possible, without hurting the reliability of services.

At the same time in reality there are many scenarios in which the actual resource utilization does not reach the target resource utilization (threshold) set in the HPA. In such cases you will have to adjust different parameters depending on which scenario your HPA is in.

HPA optimization is a very complex subject and requires in-depth knowledge to understand, so much so that it warrants its own article. Its complexity makes it difficult to work with from Resource Recommender. However it is not practical to expect all teams to regularly optimize resource utilization for a huge number of HPAs.

…At this point, we realized, "…it’s impossible, isn’t it?”

The fact is, with our current structure it requires all teams to go through complex optimizations manually in HPA or in Resource Request on a regular and perpetual basis.

Resource optimization with Tortoise

Thus we started to develop a fully managed autoscaling component, named Tortoise. It’s time to stop optimizing Kubernetes resources manually!

This Tortoise is not only cute but has been trained to do all the resource management and optimization for Kubernetes automatically.

Tortoise keeps track of past resource usage and the number of replicas in the past, and continues to optimize HPA and Resource Request/Limit based on those data. If you want to know what Tortoise does under its shell (pun intended), please refer to the documentation. You will understand that Tortoise is not just a wrapper for HPA and VPA.

Before developing Tortoise, the service development teams have been responsible for resource/HPA configuration and optimization. But now they can forget about resource management/optimization altogether.

If Tortoise fails to fully optimize any of the microservices, the responsibility to improve Tortoise to fit their use case falls in the Platform team’s hands.

As a resultTortoise allows us to completely shift those responsibilities from the service development teams to the Platform team (Tortoise).

Users configure Tortoise through CRD as follows:

apiVersion: autoscaling.mercari.com/v1beta3

kind: Tortoise

metadata:

name: lovely-tortoise

namespace: zoo

spec:

updateMode: Auto

targetRefs:

scaleTargetRef:

kind: Deployment

name: sampleTortoise is intentionally designed with a very simple user interface. Internally Tortoise automatically creates the necessary HPAs and VPAs and starts autoscaling/optimizing their workloads.

HPA exposes a significant number of parameters to be flexible enough to work with various use cases. But at the same time this same flexibility results in requiring users to have deep understanding and enough time to spend on tuning parameters.

Mercari is fortunate in that most of the services are written in Go and are gRPC/HTTP servers, as well as the fact that they are based on internal microservice templates. Therefore, the HPA configurations are actually very similar for most of the services, and the characteristics of the services, such as changes in resource usage and number of replicas, are also similar.

This allows us to hide a large number of HPA parameters behind Tortoise’s simple appearance and let Tortoise provide the same default values. Meanwhile, we can start optimizing through Tortoise’s internal recommendation logic. This approach has proven to be working pretty well for us.

Also, in contrast to the simple user interface (CRD), Tortoise has many settings for cluster administrators.

This allows the cluster administrator to manage the behavior of all Tortoises based on the behavior of the services in that cluster.

Safe migration and evaluation to Tortoise

As mentioned above, Tortoise is basically an alternative to HPA and VPA – creating Tortoise eliminates the need for HPA. There are, however, many Deployments in Mercari already working with HPA as mentioned above.

To migrate from HPA to Tortoise in this situation, we needed to safely perform complicated resource operations, from creating Tortoise to deleting HPA.

In order to make such a transition as simple and safe as possible, Tortoise has spec.targetRefs.horizontalPodAutoscalerName for smooth migration from an existing HPA.

apiVersion: autoscaling.mercari.com/v1beta3

kind: Tortoise

metadata:

name: lovely-tortoise

namespace: zoo

spec:

updateMode: Auto

targetRefs:

# By specifying an existing HPA, Tortoise will continue to optimize this HPA instead of creating a new one.

horizontalPodAutoscalerName: existing-hpa

scaleTargetRef:

kind: Deployment

name: sampleBy using horizontalPodAutoscalerName, it allows the existing HPAs to be seamlessly migrated to a Tortoise-managed HPA, hence lowering the cost of migration.

We are currently migrating many services to Tortoise in our development environment to evaluate Tortoise. Tortoise has an updateMode: Off for DryRun which allows us to validate the recommended values through the metrics exposed by the Tortoise Controller.

In the development environment, a significant number of services have already begun working with Tortoise in Off mode, and about 50 services have already begun using autoscaling with Tortoise.

We’re planning to roll it out to the production in the near future, and Tortoise will become even more sophisticated for sure!

Summary

This article described Mercari’s Kubernetes resource optimization efforts so far, the challenges we have seen, and how Tortoise, which was born out of these challenges, is trying to improve our Platform.

Mercari is looking for people to work with at Platform.

Would you like to work together to improve CI/CD, create various abstractions to improve developer experience… and breed tortoises? If you are interested, please check out our job description!