Hello, I am Toby Liu from the AI Team, Mercari Japan.

Mercari’s main mission is to create value in a global marketplace, where anyone can buy and sell.

On the marketplace, sellers and buyers hold discussions about the listed items, generating a huge amount of unstructured textual data. We believe that this data holds a significant amount of value, such as the user’s interests and preferences.

To attain a higher level of user satisfaction, Natural Language Processing (NLP) techniques, specifically affective studies (the processing of emotion from unstructured textual data), are crucial. These fields of research are on the cutting edge of NLP, and we would like to share our observations from the EMNLP’18 conference and showcase how these studies might be applied and embedded within the Mercari platform.

Organized by the Association for Computational Linguistics (ACL), the Conference on Empirical Methods in Natural Language Processing (EMNLP) is considered one of the top-tier academic conferences in NLP. EMNLP’18 covered a wide variety of topics and spanned three days of main conference along with two days of tutorials and workshops in Brussels, Belgium. Compared to other NLP conferences, it focuses on empirical methods and comprehensive comparisons. For a paper to be accepted, it must show a high level of research quality as well as important real-world applications.

In this article, we examine NLP from two different perspectives: applications and techniques.

For applications, we share research on social applications. We elaborate on how applications like emotion analysis and gender studies can be incorporated into Mercari for a better user experience. In addition, we share one study that tackles stereotypes in language models, to show how a model should be validated beyond metrics and performance.

As for techniques, we cover pioneering modeling architectures. These architectures are promising for their computational efficiency, which is what we really care about when deploying models to a production environment.

- Social Applications
  - Emotion Analysis
  - Gender Study: The Glass Ceiling in NLP
  - Dangerous Stereotypes in Language Models
- Modeling Architectures
  - Context-Sensitive Convolutional Filters
  - Novel Attempt on RNN: Layer-wise Dropouts
- Conclusion
- References

Social Applications

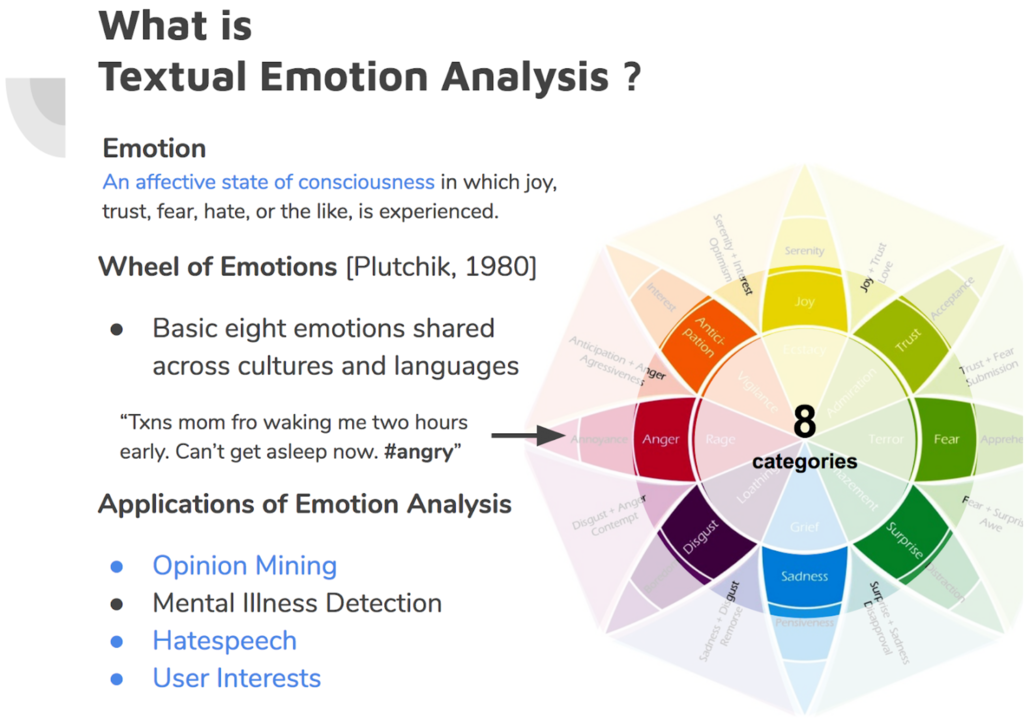

Emotion Analysis

Emotion analysis has always been a hot topic at the EMNLP conference. Textual emotion analysis attempts to predict a person’s underlying emotion from a short textual input. At this year’s conference, my labmates and I presented a new framework for textual emotion analysis. We briefly introduce it below, since emotion analysis is especially important for e-commerce platforms: opinions and interests are strongly correlated with the affective semantics of users’ comments and messages. In addition, various affective studies building on textual emotion analysis were presented at this year’s conference, and we share two of them here.

My dear labmates at National Tsing Hua University (Taiwan) and I presented a novel approach to textual emotion analysis. Our work, “CARER”[10], is a new pattern-based extraction mechanism for effective affect representation. Trained on a Twitter dataset, it adapts to the misspelled and rare words that are common on social networks. CARER shows not only state-of-the-art experimental results but also computational efficiency. In our extended analysis, we also built a Mandarin Chinese classifier to show that the whole mechanism can work cross-lingually.

We expect that this novel affect extraction mechanism can soon be brought to Mercari. As much as we want to offer amazing buying and selling experiences on Mercari, arguments are an inevitable part of any C2C marketplace. To better cope with them, emotion analysis is essential for capturing affect features and detecting arguments. Customer support staff can thus prevent miscommunication before it happens.

Building on textual emotion analysis, two interesting follow-up papers were presented at the conference. Ying Chen, et al. propose a hybrid classifier for both emotion classification and emotion cause detection[12]. Aside from detecting emotions, their classifier can also infer the causes of an emotion: an inference mechanism embedded in the model architecture indicates the segments of a sentence that imply the predicted emotion. Cornelia Caragea, et al. investigate how optimism and pessimism, as personality traits, can be understood in a data-driven manner[11]. These studies further reveal how emotions are verbally expressed from the perspective of computational linguistics.

Gender Study: The Glass Ceiling in NLP

Diversity and inclusion are core values encoded in Mercari’s culture. In the orientation for every new member of Mercari, we hold sessions to open up discussions about diversity and inclusion. An employee-driven channel has also been set up for ideas on how to better shape an open working environment. For us, cultivating corporate culture goes far beyond statistics on gender ratio and race. Numbers only show the surface and neglect the interactions within that diversity.

This is why the study “The Glass Ceiling in NLP” drew our attention. We want to know how we can assess the robustness of gender diversity and inclusion in a community. The study, presented at EMNLP’18, tells a good story about the phenomenon behind the gender ratio.

As an NLP researcher, Natalie Schluter examines gender diversity in the research community through a mentor-mentee network[1]. On average, the proportion of female researchers in the field of NLP is 33%, compared to 18% in computer science. That being said, the study, named “The Glass Ceiling in NLP”, further found several gender imbalances in mentor-mentee relationships by analyzing the authors of dozens of published papers in the NLP domain.

First, there has been a growing gap between the numbers of male and female mentors since 2005. Mentees also tend to follow mentors of the same gender, which reduces the chance that an NLP study includes perspectives from different genders. In addition, the results show that female researchers can expect to spend more time reaching mentor status than male researchers.

We might think that a 33% gender ratio is not a big gender gap compared to other fields, but the gender ratio is just a number unless we dive into the problem and find the invisible glass ceiling. This reminds us not to merely look at the surface of the issue, whether in the NLP community or in a company. Truly embracing diversity and inclusion as part of the corporate culture can only be achieved if we study the connections and bonds inside the whole picture.

Dangerous Stereotypes in Language Models

Debiasing Gender and Racial Discrimination

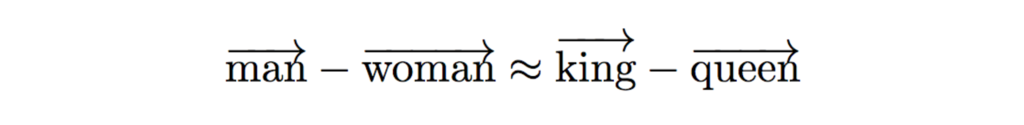

This approximate equation once excited the whole NLP community, as Word2Vec[9] finally enabled word embeddings to effectively represent the semantic meaning of words through spatial distances.
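
To make the idea of word arithmetic concrete, here is a minimal sketch. It assumes the equation in question is the well-known analogy form vec(a) − vec(b) + vec(c) ≈ vec(d), for instance king − man + woman ≈ queen. The three-dimensional vectors and the `analogy` helper are invented for illustration and are not taken from Word2Vec itself.

```python
import math

# Toy 3-dimensional embeddings, invented for illustration only.
# The axes loosely stand for (royalty, masculinity, femininity).
EMBEDDINGS = {
    "king":  [0.9, 0.8, 0.1],
    "queen": [0.9, 0.1, 0.8],
    "man":   [0.1, 0.9, 0.1],
    "woman": [0.1, 0.1, 0.9],
    "apple": [0.0, 0.2, 0.2],
}

def cosine(u, v):
    """Cosine similarity between two vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)

def analogy(a, b, c, embeddings=EMBEDDINGS):
    """Return the word closest to vec(a) - vec(b) + vec(c), excluding the inputs."""
    target = [x - y + z for x, y, z in zip(embeddings[a], embeddings[b], embeddings[c])]
    candidates = [w for w in embeddings if w not in (a, b, c)]
    return max(candidates, key=lambda w: cosine(target, embeddings[w]))

print(analogy("king", "man", "woman"))  # queen
```

With real embeddings the same nearest-neighbor search runs over the whole vocabulary, which is also why stereotypes present in the training text can surface as analogies.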

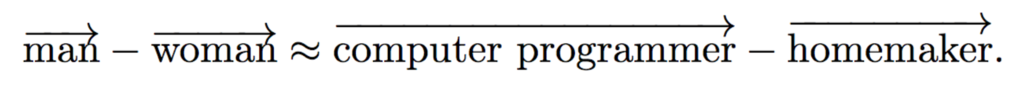

Unfortunately, gender stereotypes were later found in the model as well, since they are implicitly expressed in the training documents. For example, the embeddings relate “man” to “computer programmer” in the same way they relate “woman” to “homemaker”[3].

This example implies that a man is mostly associated with being a computer programmer, whereas a woman is associated with being a homemaker. Previous studies had addressed gender bias in word embeddings; however, Ji Ho Park et al. further found that things can get even worse when it comes to abusive language detection[2]. The detection model is heavily biased against minorities and is sensitive to terms for specific groups of people[4], neglecting the entire context. For example,

f(“You are a good woman”) -> sexist

f(“I am a gay man”) -> highly abusive

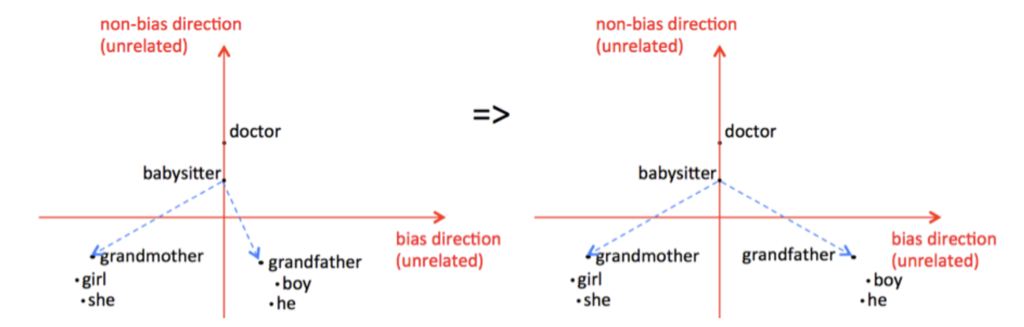

To overcome this issue, they first debias the word embeddings by tuning gender-specific terms against a set of neutral words, as proposed by Tolga Bolukbasi, et al.[3].
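
As a rough illustration of what debiasing an embedding can look like, the sketch below removes the component of a word vector that lies along a gender direction. This is a simplified, neutralize-style projection under our own assumptions, not the exact procedure used in either paper. The toy vectors and the function names are hypothetical.

```python
import math

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def normalize(v):
    norm = math.sqrt(dot(v, v))
    return [a / norm for a in v]

def neutralize(word_vec, gender_direction):
    """Remove the component of word_vec that lies along gender_direction."""
    g = normalize(gender_direction)
    projection = dot(word_vec, g)
    return [a - projection * b for a, b in zip(word_vec, g)]

# Toy 3-dimensional vectors, invented for illustration only.
he = [0.1, 0.9, 0.2]
she = [0.1, -0.9, 0.2]
programmer = [0.7, 0.4, 0.3]  # leans towards "he" on the second axis

# Estimate the gender direction from one definitional pair (an assumption;
# several pairs would normally be combined).
gender_direction = [a - b for a, b in zip(he, she)]
debiased = neutralize(programmer, gender_direction)

print("bias before:", dot(programmer, normalize(gender_direction)))
print("bias after: ", dot(debiased, normalize(gender_direction)))
```

After the projection, the word vector no longer has any component along the estimated gender direction, while its other dimensions are left untouched.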

Furthermore, they retrain the detection model on training sentences in which gender-specific terms are swapped. For example, the output of f(“He is f**ked up”) is expected to be similar to that of f(“She is f**ked up”). As a result, these approaches successfully teach the model a good lesson on gender equality. More details behind the study can be found in the author’s post. This is also a big lesson for us here at the Mercari AI team. We should not just verify our models on accuracy, but also investigate whether they consider every specific scenario and context, in order to maintain a marketplace that includes every buyer and seller.
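
The gender-swapping idea can be sketched as a small data augmentation step. The swap table, the labels, and the function names below are hypothetical and deliberately tiny. A real table would be much larger and would need care with ambiguous words.

```python
import re

# A tiny, hypothetical swap table for illustration only.
GENDER_PAIRS = {
    "he": "she", "she": "he",
    "man": "woman", "woman": "man",
    "boy": "girl", "girl": "boy",
}

# Match whole words only, so "man" is not matched inside "woman".
PATTERN = re.compile(r"\b(" + "|".join(GENDER_PAIRS) + r")\b", flags=re.IGNORECASE)

def swap_gender(sentence):
    """Replace each gendered word with its counterpart, keeping capitalization."""
    def replace(match):
        word = match.group(0)
        swapped = GENDER_PAIRS[word.lower()]
        return swapped.capitalize() if word[0].isupper() else swapped
    return PATTERN.sub(replace, sentence)

def augment(dataset):
    """Return the original (text, label) pairs plus their gender-swapped copies."""
    swapped = [(swap_gender(text), label) for text, label in dataset]
    return dataset + [pair for pair in swapped if pair not in dataset]

train = [
    ("He is a terrible person", "abusive"),
    ("You are a good woman", "not_abusive"),
]
for text, label in augment(train):
    print(label, "|", text)
```

Because each swapped sentence keeps its original label, the model sees both gendered variants with the same target and has less reason to treat the gendered word itself as a signal.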

Modeling Architectures

At the Mercari AI team, we actively keep up with cutting-edge techniques to strike a balance between efficiency and precision. From this year’s EMNLP conference, we focus on modeling topics and share one interesting attention mechanism introduced to convolutional filters, as well as one novel approach to RNNs.

Context-Sensitive Convolutional Filters

CNNs work efficiently with short texts in NLP modeling. Their convolutional filters capture context features, and they usually outperform LSTM-based encoders on datasets of limited size. Also, because a CNN architecture works in a hierarchical manner, salience maps can be helpful for error analysis.

Nonetheless, CNNs do have drawbacks. Features extracted by the filters in the convolutional layers are treated equally in the max-pooling stage, where the general global context is dropped. Let’s take a look at an example paragraph here.

As human readers, we first keep the opening question, “Why do we need attention mechanism in CNN models?”, in mind when reading a passage. The second sentence elaborates on the question, and the third sentence gives a general answer to the question we have in mind. However, a CNN works in a different manner.

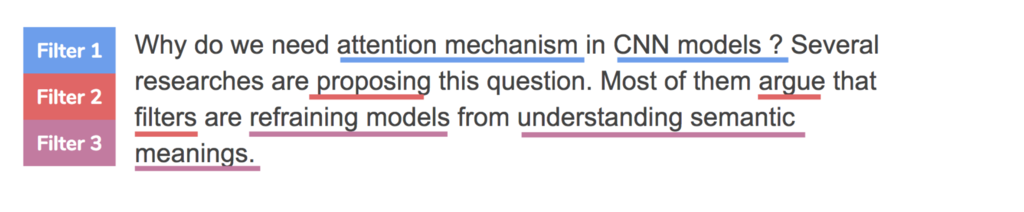

Imagine we are building a question answering model. The above diagram illustrates a salience map of the filters before the max-pooling layers. We have three filters in the convolutional layers, and the underlined words indicate where each filter puts higher weights. As the task is to answer the question posed by the first sentence, the features extracted by filter 3 are critical for the model to produce an answer. However, if all filters are treated equally, the correlation between filters 1 and 3 cannot stand out in the following max-pooling layers, and the model is not able to perform well on this task. Context is easily neglected in this case. This demonstration of how a CNN works in its convolutional layers shows how it differs from human reading comprehension.

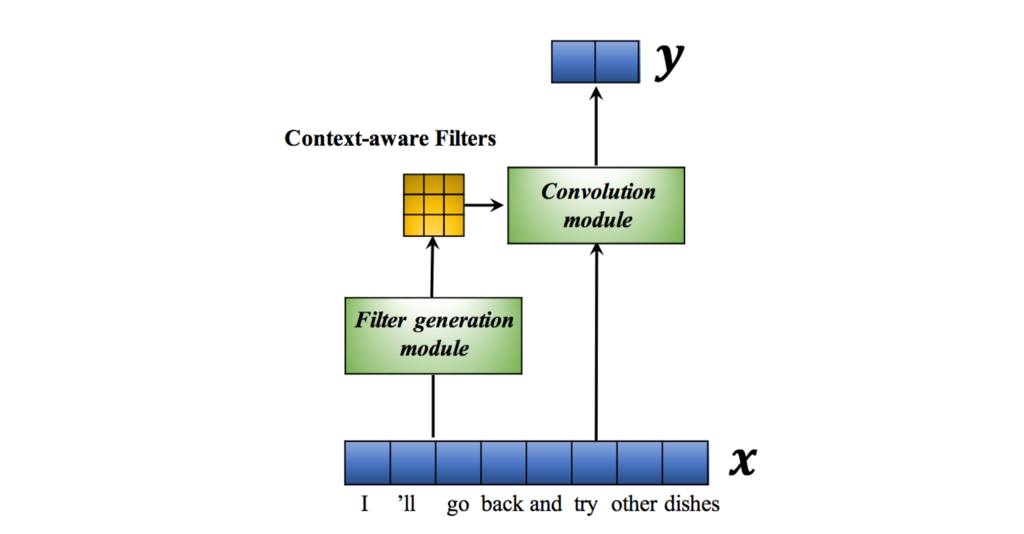

Dinghan Shen, et al. propose a method called “Context-Sensitive Convolutional Filters”[6]. In this method, a dense layer is added so that the model can learn weights for all convolutional filters. These so-called “context-aware filters” are then merged into the convolution module, which equips the CNN model with a filter-wise attention mechanism. In experiments on context-dependent tasks like question answering, the model becomes even more powerful by merging context-embedded representation layers.

Context-sensitive convolutional filters offer an alternative attention mechanism for CNN models. By considering correlations among filters, a CNN learns context the way we read.
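
To illustrate the general idea of weighting filters by context, here is a simplified sketch in plain Python. It follows only the high-level description above (a dense layer that scores each filter given a context vector) and is not the architecture from the paper[6]. All shapes, values, and function names are assumptions made for the example.

```python
import math

def conv1d_max_pool(tokens, filters):
    """Slide each filter over the token windows, apply ReLU, then max-pool over time.

    tokens: list of token vectors; filters: list of (width x dim) matrices.
    Returns one pooled activation per filter.
    """
    pooled = []
    for f in filters:
        width = len(f)
        activations = []
        for start in range(len(tokens) - width + 1):
            window = tokens[start:start + width]
            score = sum(w * x for f_row, tok in zip(f, window) for w, x in zip(f_row, tok))
            activations.append(max(score, 0.0))  # ReLU
        pooled.append(max(activations))
    return pooled

def softmax(scores):
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def filter_attention(pooled, context, dense_weights):
    """Re-weight pooled filter outputs with weights computed from a context vector.

    dense_weights has one row per filter; each row maps the context to a score.
    """
    scores = [sum(w * c for w, c in zip(row, context)) for row in dense_weights]
    weights = softmax(scores)
    return [w * p for w, p in zip(weights, pooled)], weights

# Toy inputs, invented for illustration: 4 tokens of dimension 2, 3 filters of width 2.
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [0.5, 0.5]]
filters = [
    [[1.0, 0.0], [0.0, 1.0]],
    [[0.0, 1.0], [1.0, 0.0]],
    [[1.0, 1.0], [1.0, 1.0]],
]
context = [1.0, 0.0]                                  # e.g. an encoding of the question
dense_weights = [[0.0, 0.0], [0.0, 0.0], [2.0, 0.0]]  # hypothetical learned parameters

pooled = conv1d_max_pool(tokens, filters)
attended, weights = filter_attention(pooled, context, dense_weights)
print("pooled:  ", pooled)
print("weights: ", weights)
print("attended:", attended)
```

Plain max-pooling passes every filter on with equal importance. With the context-dependent weights, the third filter dominates the final representation for this particular context.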

Novel Attempt on RNN: Layer-wise Dropouts

Extracted from generalized bidirectional language models, ELMo is a state-of-the-art contextual representation proposed by Matthew Peters et al.[7]. It drew a lot of attention when it was first published at NAACL this year, and it achieves good results in evaluations on major public NLP datasets.

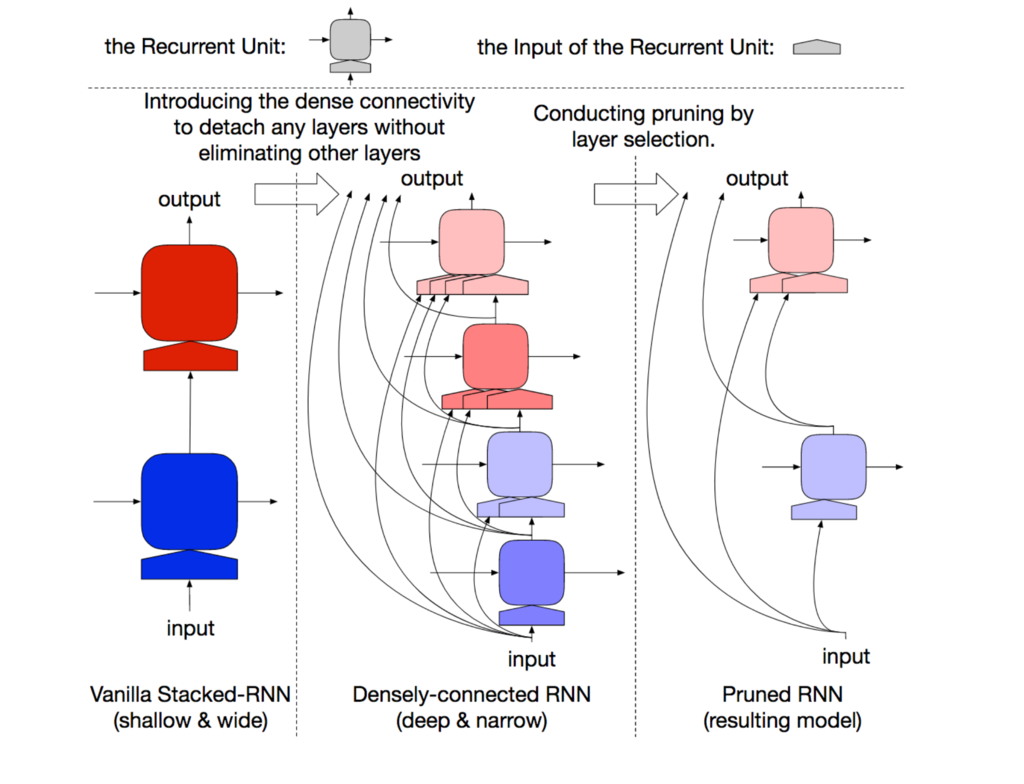

Backed by a multi-layer bidirectional RNN, ELMo has a high degree of complexity. Liyuan Liu, et al. therefore propose pruning the model with layer-wise dropout[8]. This is interesting because dropout has conventionally been applied element-wise.

Their results show that, with layer-wise dropout, ELMo can still perform almost as well as the original architecture with less complexity and computation. The study inspires us to try new approaches when deploying RNN-based models to production environments on the Mercari platform. We are excited to dive further into the end-to-end impact of pruning layers and how it affects the final output.
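
The difference between element-wise and layer-wise dropout can be sketched in a few lines. This is only an illustration under our own assumptions, not the implementation from the paper[8]: the layer outputs are toy numbers, layers are combined by a plain sum as a stand-in for however the real model combines them, and the layer-wise variant does no rescaling for simplicity.

```python
import random

def elementwise_dropout(layers, p, rng):
    """Zero individual units independently with probability p (standard dropout)."""
    scale = 1.0 / (1.0 - p)
    return [[0.0 if rng.random() < p else x * scale for x in layer] for layer in layers]

def layerwise_dropout(layers, p, rng):
    """Zero an entire layer's output with probability p, keeping at least one layer."""
    keep = [rng.random() >= p for _ in layers]
    if not any(keep):
        keep[rng.randrange(len(layers))] = True
    return [layer if k else [0.0] * len(layer) for layer, k in zip(layers, keep)]

def combine(layers):
    """Sum the per-layer outputs into a single representation (a simplification)."""
    return [sum(values) for values in zip(*layers)]

rng = random.Random(0)
# Toy outputs of three stacked layers for a single token.
layer_outputs = [[0.2, 0.4], [0.1, 0.3], [0.5, 0.6]]

dropped = layerwise_dropout(layer_outputs, p=0.5, rng=rng)
print("layer-wise:  ", dropped)
print("combined:    ", combine(dropped))
print("element-wise:", elementwise_dropout(layer_outputs, p=0.5, rng=rng))
```

A model trained while whole layers are randomly missing has to cope without any single layer, which is what makes it plausible to remove layers afterwards and save computation.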

Conclusion

In this article, we have reviewed a number of approaches to NLP, some of which are profoundly changing the field. Novel modeling architectures like context-sensitive convolutional filters and layer-wise dropout for RNNs show exciting experimental results. We are eager to explore how they can improve our existing CNN models and enhance the computational efficiency of RNNs for better production deployment.

As for the social applications at EMNLP’18, emotion analysis equips Mercari with the capability to proactively address miscommunication in transactions.

The Glass Ceiling in NLP and Gender Bias in Language Models tell stories beyond statistics and model performance. These studies of social applications are not merely technical; they also serve as a practical reminder of how important diversity and inclusion are, whether in a model, a community, or a working environment.

There is other inspiring research on graph convolutional networks and cross-lingual topology alignments that we did not include in this article. These pioneering studies drive the AI team at Mercari to capture implicit sentiment cues on the marketplace with cutting-edge NLP techniques. By doing this, we endeavor to provide a fantastic user experience where anybody can buy and sell items easily.

Are these studies as interesting to you as they are to us? Come join us, we’re hiring!!

References

1. Schluter, Natalie. “The Glass Ceiling in NLP.” Proceedings of the 2018 Conference on Empirical Methods in Natural Language Processing. 2018.

2. SemEval 19: http://alt.qcri.org/semeval2019/index.php?id=tasks

3. Bolukbasi, Tolga, et al. “Man Is to Computer Programmer as Woman Is to Homemaker? Debiasing Word Embeddings.” Advances in Neural Information Processing Systems. 2016.

4. Park, Ji Ho, Jamin Shin, and Pascale Fung. “Reducing Gender Bias in Abusive Language Detection.” Proceedings of the 2018 Conference on Empirical Methods in Natural Language Processing. 2018.

5. Cavaioni, Mike. “Deep Learning series: Sentiment Classification.” https://medium.com/machine-learning-bites/deeplearning-series-sentiment-classification-d6fb07b0da43

6. Shen, Dinghan, et al. “Learning Context-Sensitive Convolutional Filters for Text Processing.” arXiv preprint arXiv:1709.08294 (2017).

7. Peters, Matthew, et al. “Deep Contextualized Word Representations.” Proceedings of the 2018 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies, Volume 1 (Long Papers). 2018.

8. Liu, Liyuan, et al. “Efficient Contextualized Representation: Language Model Pruning for Sequence Labeling.” arXiv preprint arXiv:1804.07827 (2018).

9. Mikolov, Tomas, Quoc V. Le, and Ilya Sutskever. “Exploiting Similarities among Languages for Machine Translation.” arXiv preprint arXiv:1309.4168 (2013).

10. Saravia, Elvis, et al. “CARER: Contextualized Affect Representations for Emotion Recognition.” Proceedings of the 2018 Conference on Empirical Methods in Natural Language Processing. 2018.

11. Caragea, Cornelia, Liviu P. Dinu, and Bogdan Dumitru. “Exploring Optimism and Pessimism in Twitter Using Deep Learning.” Proceedings of the 2018 Conference on Empirical Methods in Natural Language Processing. 2018.

12. Chen, Ying, et al. “Joint Learning for Emotion Classification and Emotion Cause Detection.” Proceedings of the 2018 Conference on Empirical Methods in Natural Language Processing. 2018.