Hi everyone! I am Rakesh from Mercari’s Seller Engagement team. Recently I had the opportunity to mentor an intern at Mercari, Priyansh, and this article summarizes part of his work on using Firebase for client-side machine learning models.

Introduction

The availability of huge amounts of data, advances in processing power and storage, and the increasing demand for automation and data-driven decision making have made machine learning widely popular today.

Many companies want to use machine learning, but it can be very expensive. The costs of developing, training, and deploying models can be significant, as can the cost of specialized hardware such as GPUs, cloud computing resources, storage, and networking.

Traditionally, machine learning models are deployed on the server side, but client-side machine learning is becoming increasingly popular. Running AI on the edge (on the user's device) has many advantages:

- No internet connection required.

- No need to send user data to a server.

- Real-time inference with very low latency.

- Little to no server cost.

Why we wanted to use ML on the client side

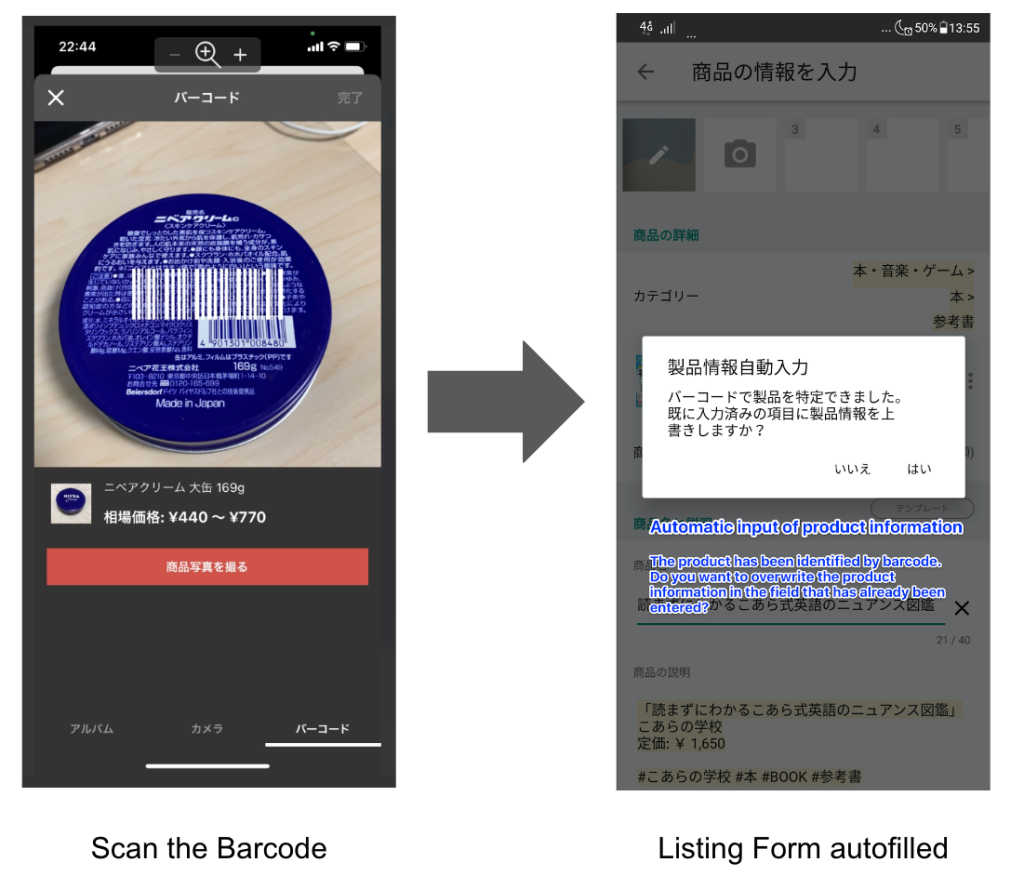

At Mercari, we are always trying to increase the value of our marketplace by building innovative features. Barcode listing is one such feature: it lets sellers list items in certain categories easily by scanning the item's barcode.

Some users, especially new ones, are not fully aware of all our features, and barcode listing is one of them. To address this and improve the customer experience, we decided to use edge AI. We built a new feature called the listing dispatcher, which runs an ML model on the client to predict, from the item's first photo, whether the category of the item being listed supports barcode listing.

How do we use ML on client devices?

One popular open source option for client-side machine learning inference is TensorFlow Lite (TFLite), a library for mobile and embedded devices. Its key features include:

- Optimized for on-device machine learning.

- Supports multiple platforms.

- Support for many languages, such as Java and Swift.

- High performance.

- Support for many common machine learning tasks, such as image classification and object detection.
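Image classification is the task the listing dispatcher performs. Such a model typically outputs a vector of raw scores (logits) that the app still has to turn into a decision. The sketch below shows one common way to do that post-processing, written in Python for brevity. The two labels and the 0.5 threshold are hypothetical, since the article does not describe the real model's outputs.

```python
import math

# Hypothetical labels for a two-class model; the article does not describe
# the real model's outputs.
LABELS = ["barcode_supported", "barcode_not_supported"]


def softmax(logits):
    """Turn raw model outputs (logits) into probabilities that sum to 1."""
    peak = max(logits)  # subtracting the max avoids overflow in exp()
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def should_suggest_barcode_listing(logits, threshold=0.5):
    """True when P(barcode_supported) reaches a hypothetical threshold."""
    probs = softmax(logits)
    return probs[LABELS.index("barcode_supported")] >= threshold


print(should_suggest_barcode_listing([2.0, 0.5]))  # True
print(should_suggest_barcode_listing([0.1, 1.5]))  # False
```

In an app, the logits would come from the TFLite interpreter's output tensor. The threshold controls the trade-off between missed suggestions and unnecessary ones.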

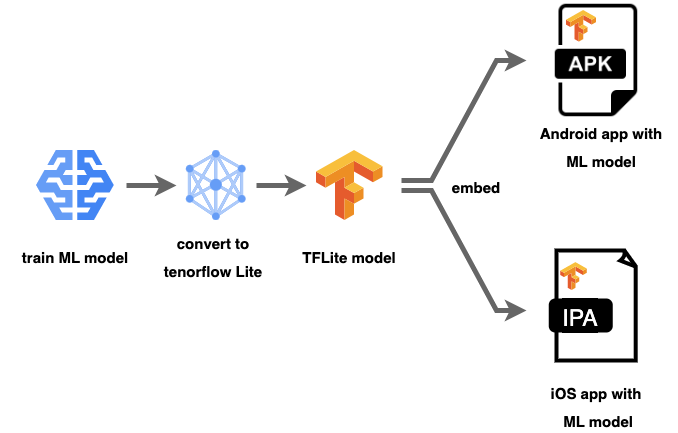

We can simply embed the TensorFlow Lite model in an Android or iOS app when packaging it as an APK or IPA. This is one of the easiest ways to use ML on the client side, but it has certain limitations:

- ML models are often large, and bundling them with the app increases the app size, which can lead to fewer installs from the App Store or Google Play.

- Compressing the model to reduce its size adds extra work, and the compression may come at the cost of model accuracy.

- It couples the model to the client development flow: shipping a new model version requires shipping a new app version.
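The compression trade-off in the list above can be made concrete. A model's weight storage is roughly its parameter count times the bytes per parameter, so storing weights as 8-bit integers instead of 32-bit floats shrinks them about 4x, at the cost of rounding error. The sketch below shows a simplified symmetric int8 quantization in plain Python. It illustrates the idea only and is not TensorFlow Lite's actual converter. The weights and the 5M-parameter model size are hypothetical.

```python
def quantize_int8(weights):
    """Symmetric per-tensor int8 quantization: w is approximated by scale * q."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    # Clamp to [-127, 127] so every value fits in a signed 8-bit integer.
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q, scale):
    """Map int8 values back to floats; the result only approximates the input."""
    return [scale * v for v in q]


def model_size_mb(num_params, bytes_per_param):
    """Approximate weight storage: parameter count times bytes per parameter."""
    return num_params * bytes_per_param / 1_000_000


weights = [0.82, -0.31, 0.004, -1.27, 0.5]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print(q)          # small integers in [-127, 127]
print(max_error)  # nonzero: this is the accuracy cost of compression

# Hypothetical 5M-parameter model: 4-byte float32 weights vs 1-byte int8 weights.
print(model_size_mb(5_000_000, 4))  # 20.0
print(model_size_mb(5_000_000, 1))  # 5.0
```

Real converters use more careful schemes, such as per-channel scales and calibration data. The basic size-versus-precision trade-off is the same.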

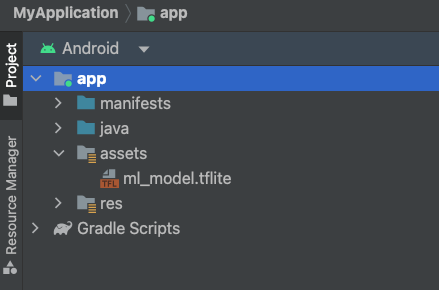

Embedding the TFLite model in an Android app by adding it to the app's assets

Is there a better way?…

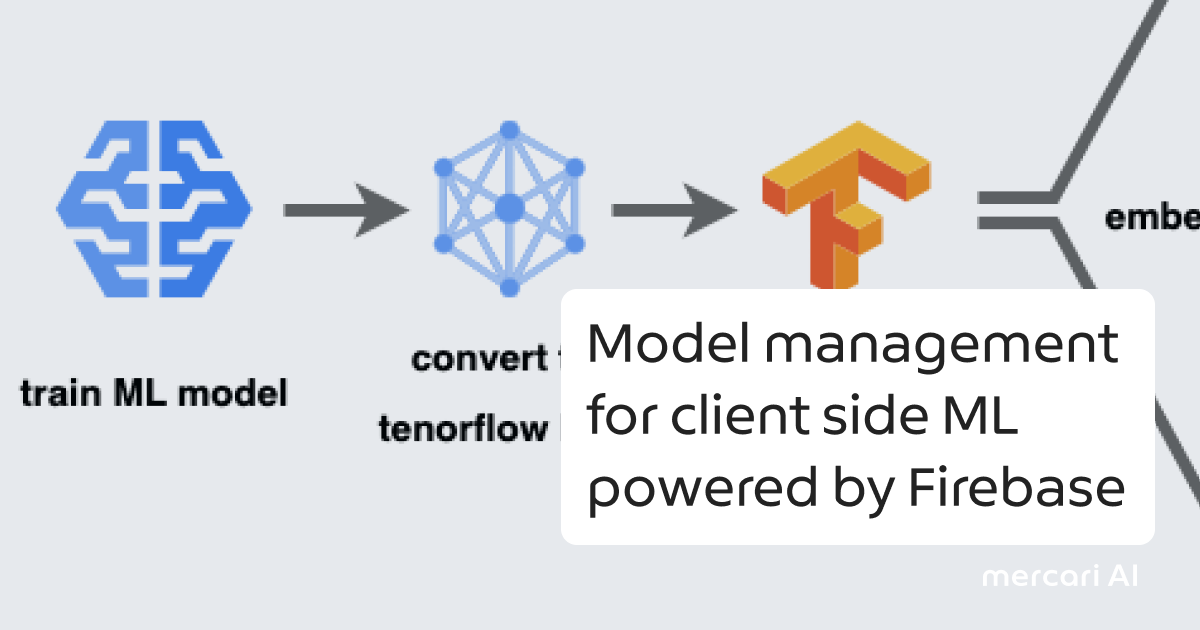

Firebase Machine Learning Custom Model

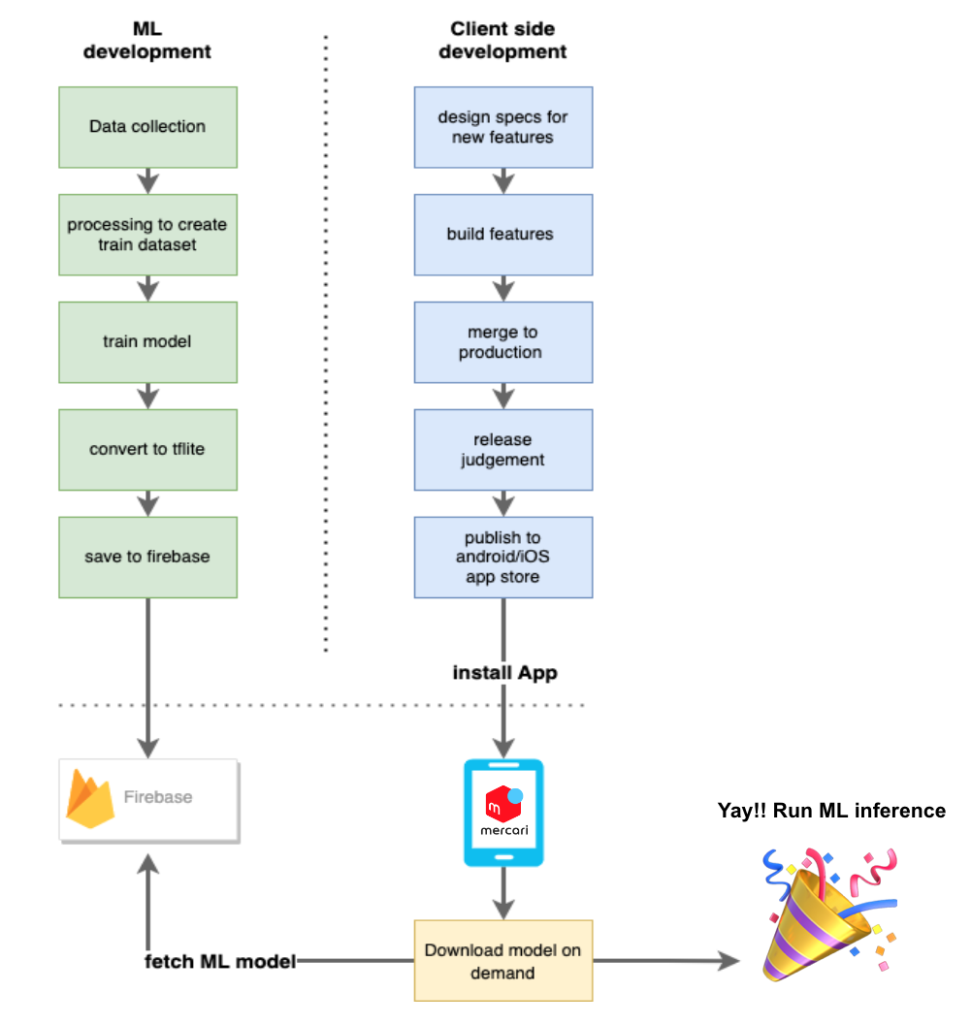

This Firebase service lets you deploy your own custom ML models to edge devices. Instead of packaging the model with the app, the client downloads it from Firebase in the background after installation, on demand, only once, and then reuses the downloaded copy for subsequent inferences.
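The "download once, then reuse" behavior follows a simple caching pattern. On Android, Firebase exposes it through `FirebaseModelDownloader.getModel()`, which returns a `CustomModel` pointing to the locally stored file. The plain-Python sketch below only illustrates the pattern. `ModelCache`, `fetch_bytes` and the model name `listing_dispatcher` are hypothetical, and the fake fetch function stands in for the real network download.

```python
import os
import tempfile


class ModelCache:
    """Download a model file once, then serve the local copy on later calls.

    `fetch_bytes` is a hypothetical callable standing in for the real
    download (e.g. via the Firebase SDK); it takes a model name and
    returns the model file's bytes.
    """

    def __init__(self, cache_dir, fetch_bytes):
        self.cache_dir = cache_dir
        self.fetch_bytes = fetch_bytes

    def get_model_path(self, name):
        path = os.path.join(self.cache_dir, name + ".tflite")
        if not os.path.exists(path):
            # First use: download and persist the model to local storage.
            data = self.fetch_bytes(name)
            tmp_path = path + ".part"
            with open(tmp_path, "wb") as f:
                f.write(data)
            # Rename into place so a half-written file is never used.
            os.replace(tmp_path, path)
        return path


downloads = []


def fake_fetch(name):
    downloads.append(name)
    return b"fake-model-bytes"


cache = ModelCache(tempfile.mkdtemp(), fake_fetch)
first = cache.get_model_path("listing_dispatcher")
second = cache.get_model_path("listing_dispatcher")
print(first == second, len(downloads))  # True 1
```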

This approach has many advantages:

- ML models are hosted on Firebase.

- It helps with model versioning and model management.

- It decouples the client development flow from the ML development flow.

- It ensures that the app uses the latest version of the model.

- You can configure conditions for when to download the latest model.

- You can A/B test two versions of a model.
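Firebase runs such experiments through Remote Config and A/B Testing, typically by having a Remote Config parameter name the model to download. The core idea is a stable split of users between two model versions, which can be sketched as follows. The hash-based assignment, the user IDs, the model and experiment names, and the 50% split are all hypothetical. This is not how Firebase assigns users internally.

```python
import hashlib


def assign_model(user_id, experiment, share_b=0.5):
    """Deterministically assign a user to a model version for an A/B test.

    Hashing "experiment:user_id" gives each user a stable bucket in [0, 1),
    so the same user sees the same model version on every launch.
    """
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode()).digest()
    bucket = int.from_bytes(digest[:4], "big") / 2**32
    return "model_b" if bucket < share_b else "model_a"


users = [f"user-{i}" for i in range(10_000)]
assignments = [assign_model(u, "dispatcher-test") for u in users]
share = assignments.count("model_b") / len(users)
print(f"{share:.1%} of users get model_b")
```

Keeping the assignment deterministic matters. If users switched models between sessions, their behavior could not be attributed to either version.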

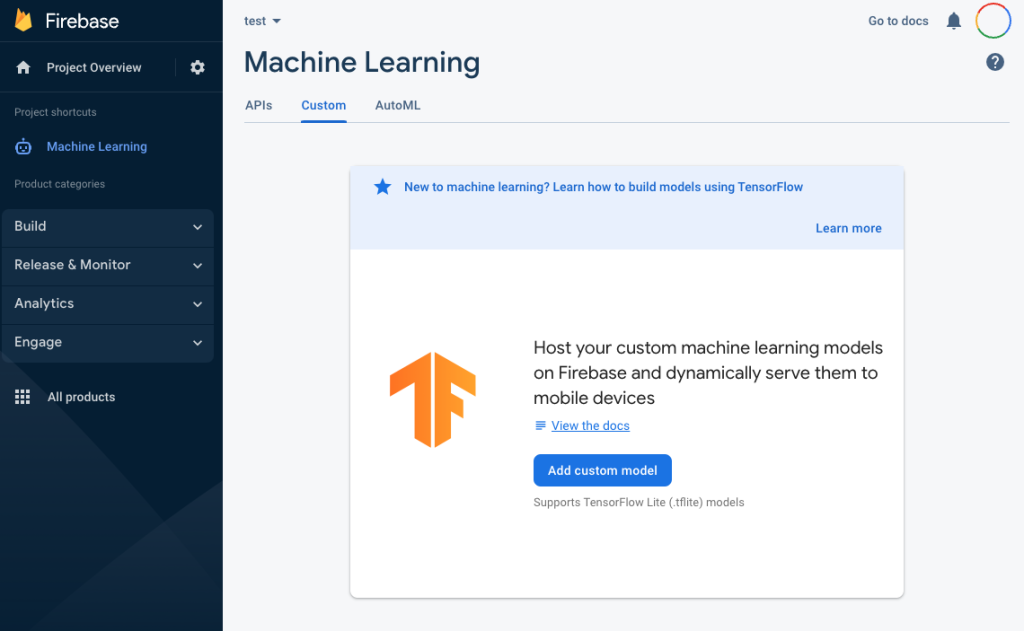

Setting up a custom ML model in the Firebase console

Although loading models dynamically from Firebase has many advantages, we need to be mindful of the following:

- Error handling: fall back to a default model, or disable the ML feature, if a new version of the model has errors.

- Download timing: when should the model be downloaded? For example, only when the user is connected to Wi-Fi.
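Both concerns can be expressed as plain decision logic. On Android, the Firebase SDK's `CustomModelDownloadConditions.Builder().requireWifi()` handles the Wi-Fi condition. The Python below only illustrates the policy. `should_download`, `load_model_with_fallback` and the two loader functions are hypothetical names.

```python
from typing import Callable, Optional


def should_download(on_wifi: bool, wifi_only: bool = True) -> bool:
    """Start a model download only when the network condition is satisfied."""
    return on_wifi or not wifi_only


def load_model_with_fallback(
    load_latest: Callable[[], bytes],
    load_default: Optional[Callable[[], bytes]] = None,
) -> Optional[bytes]:
    """Prefer the latest model, fall back to a default, else disable the feature.

    Returns the model to use, or None to signal that the ML feature should be
    turned off for this session.
    """
    try:
        return load_latest()
    except Exception:
        # The new model version is missing or broken; try the default instead.
        if load_default is not None:
            try:
                return load_default()
            except Exception:
                pass
        return None


def broken_latest() -> bytes:
    raise ValueError("corrupted model file")


def bundled_default() -> bytes:
    return b"default-model"


print(should_download(on_wifi=False))                            # False
print(load_model_with_fallback(broken_latest, bundled_default))  # b'default-model'
print(load_model_with_fallback(broken_latest))                   # None
```

Returning `None` instead of raising keeps the decision to hide the feature in one place. A broken model then degrades the experience instead of crashing the app.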

Conclusion

ML is useful in a wide range of use cases, such as object detection and barcode scanning. Using ML on the client side comes with various challenges, but Firebase custom models make it much easier. They provide APIs to fetch ML models remotely, model management, faster experimentation, and configuration options that developers can customize.