This article is part of the Developer Productivity Engineering Camp blog series, brought to you by @hatappi from the Microservices Network team.

Introduction

The Microservices Network team maintains Istio as one of its components.

At Mercari, we started a project in 2017 to replace existing systems with microservices, and the number of microservices is still increasing. We introduced Istio, one of the service mesh implementations, to address the issues that arise as the number of microservices grows.

This article will show you what the problem is and how Istio solves it.

Network problems in Microservice Architecture

Adopting Microservice Architecture introduces network problems that do not arise with Monolithic Architecture. Let’s look at an example.

Let’s say we adopt Monolithic Architecture and create the following web application. When the application receives a request from the user, it gets information from the datastore and returns a response to the user. The application makes a request to the datastore, but all other processing happens inside the application.

On the other hand, when we adopt Microservice Architecture, multiple services communicate over the network to return a response to a request, as shown in the figure below. Therefore, we need to consider concerns such as service discovery and reliability (timeouts, retries, and so on) that we did not need to consider within a single application. If we have only a few services, we can handle these concerns individually, but as the number of services grows to hundreds or thousands, this becomes increasingly complex and difficult.

Ideally, the developers of each service should focus on implementing the business logic, and problems specific to Microservice Architecture should be solved by another layer or component. One way to do this is with a service mesh.

What is a service mesh?

A service mesh is a "dedicated infrastructure layer responsible for communication between services." The service mesh relies on a network proxy mesh, which is composed of sidecar proxies deployed along with each service. These proxies provide features such as Service Discovery, Security, and Observability, allowing us to decouple these from the application code.

One of the service mesh implementations is Istio, which we are using.

What is Istio?

Istio consists of two components: the data plane and the control plane.

Data plane: An Envoy proxy is deployed alongside the application using the sidecar pattern. The Envoy proxy intercepts all incoming and outgoing traffic to provide the features for communication between services. If you want to know how the Envoy proxy intercepts incoming traffic, please read my previous article.

Control plane: It manages the configuration of, and receives metrics from, all Envoy proxies in the data plane, and dynamically configures them via an API provided by the Envoy proxy. This has the advantage that the data plane does not need to be restarted when a change is made.

Problems that Istio solves

Let’s look at a couple of the problems that Istio solves. This list is not exhaustive, so please refer to the official Istio documentation for others.

Traffic Control

If we want flexible routing such as A/B testing or weighted routing, it is difficult to achieve with only the Kubernetes Service resource. For A/B testing, for example, one approach is to deploy each version of the application as a Pod, create a Service resource for each version, and register each Service resource at the caller. However, this approach is not realistic for a Microservice Architecture with hundreds of services, because every caller must be changed whenever the A/B test changes.
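As a rough sketch of the Service-per-version approach described above, the manifests could look like the following. The Service names, the `app` and `version` labels, and the port are assumptions made for illustration. Every caller would have to know both Service names and decide by itself how to split traffic between them.

```yaml
# Sketch only: names, labels, and ports are illustrative assumptions.
apiVersion: v1
kind: Service
metadata:
  name: http-server-version-a   # callers must reference this name explicitly
spec:
  selector:
    app: http-server
    version: a                  # selects only the version A Pods
  ports:
  - port: 80
---
apiVersion: v1
kind: Service
metadata:
  name: http-server-version-b   # a second name that every caller must also know
spec:
  selector:
    app: http-server
    version: b                  # selects only the version B Pods
  ports:
  - port: 80
```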

Istio can dynamically change the routing information via the API provided by the data plane (Envoy proxy), which enables flexible routing. For example, if we want to route 70% of requests to version A and 30% of requests to version B, we can define Istio’s VirtualService as shown below. This definition is passed to the caller’s Envoy proxy via the control plane. Since the Envoy proxy switches the destination according to the ratio, the caller does not need to be aware of how destinations are switched.

    apiVersion: networking.istio.io/v1beta1
    kind: VirtualService
    ...
    spec:
      hosts:
      - http-server
      http:
      - route:
        - destination:
            host: http-server
            subset: version-a
          weight: 70
        - destination:
            host: http-server
            subset: version-b
          weight: 30

Reliability

Let’s say there are the following dependencies among services.

What problems can occur from a reliability perspective?

Let’s suppose that Service-D slows down. In this case, its caller (Service-C) also slows down, and so do the callers of Service-C (Service-A and Service-B). This phenomenon is called a cascading failure. One solution is to put a circuit breaker in front of Service-D. While Service-D is unhealthy, the circuit breaker temporarily blocks requests from Service-C to Service-D and prevents the failure from propagating.

In our next example, let’s suppose that Service-A sends a huge number of requests to Service-C due to a bug.

If Service-C does not expect that volume of requests, it may not be able to handle all of them. To avoid this situation, we can implement rate limiting to protect the service from an unexpected volume of requests.

It is also important to consider implementing appropriate timeouts and retries in the caller.

Should we implement those features in our application code? It is technically possible, but it is not practical to implement them consistently across hundreds of services. By creating a shared library, applications can avoid implementing them from scratch in each service. However, the library has to be implemented for each language the services use, and whenever we change the library, we need to update all services.

The Envoy proxy provides these features (circuit breaking, rate limiting, timeouts, and retries), and we can use them independently of the language or framework used by our application. On top of that, changes are applied between the control plane and the data plane via the Envoy proxy API, so there is no need to update the application itself.
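As a hedged illustration of what such configuration can look like, the sketch below uses a DestinationRule for circuit breaking and a VirtualService for timeouts and retries on calls to Service-D. The host name `service-d`, the resource names, and every threshold are assumptions chosen for the example, not values used at Mercari.

```yaml
# Sketch only: hosts, names, and all thresholds are illustrative assumptions.
apiVersion: networking.istio.io/v1beta1
kind: DestinationRule
metadata:
  name: service-d-circuit-breaker    # hypothetical name
spec:
  host: service-d                    # assumed Service name for Service-D
  trafficPolicy:
    connectionPool:
      http:
        http1MaxPendingRequests: 100 # cap on requests queued for a connection
        http2MaxRequests: 1000       # cap on active requests to the destination
    outlierDetection:
      consecutive5xxErrors: 5        # eject a host after 5 consecutive 5xx errors
      interval: 10s                  # time between ejection sweeps
      baseEjectionTime: 30s          # minimum time an ejected host stays out
      maxEjectionPercent: 50         # eject at most half of the hosts
---
apiVersion: networking.istio.io/v1beta1
kind: VirtualService
metadata:
  name: service-d-timeouts           # hypothetical name
spec:
  hosts:
  - service-d
  http:
  - route:
    - destination:
        host: service-d
    timeout: 2s                      # overall deadline for a request
    retries:
      attempts: 3                    # number of retries allowed per request
      perTryTimeout: 500ms           # deadline for each individual attempt
      retryOn: 5xx,connect-failure   # conditions that trigger a retry
```

Because these resources are applied through the control plane, changing a threshold does not require rebuilding or redeploying the calling services.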

Security

Basically, if we expose a Service to the Internet, we use HTTPS. What about communication between services inside a Kubernetes cluster? If we use plain-text HTTP and attackers break into the cluster (e.g. through a vulnerable library), they can snoop on communication between services. To avoid that, we should also use HTTPS for service-to-service communication.

To use HTTPS between services, each service has to manage certificates and private keys. However, misconfiguration, such as a certificate that is not renewed, can break HTTPS communication, so managing this individually for each service is not realistic.

Istio supports mutual TLS authentication (a.k.a. mTLS). The control plane distributes TLS certificates and keys to the data plane, and the Envoy proxies transparently encrypt and decrypt the communication, removing the need for the application to handle HTTPS.

Another aspect is access control. For example, when Service-A should only allow requests from Service-B, we can use NetworkPolicies in Kubernetes. NetworkPolicies implement access control at OSI layers 3 and 4. Since HTTP operates at layer 7, path-based access control (e.g. Service-A allows Service-B to access only the /foo path and Service-C to access only the /bar path) cannot be done with NetworkPolicies.
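As a minimal sketch, mTLS can be enforced for a namespace with a PeerAuthentication resource like the one below. The namespace name `service-a` is an assumption for illustration.

```yaml
# Sketch only: the namespace is an illustrative assumption.
apiVersion: security.istio.io/v1beta1
kind: PeerAuthentication
metadata:
  name: default
  namespace: service-a   # hypothetical namespace of the service to protect
spec:
  mtls:
    mode: STRICT         # workloads in this namespace accept only mTLS traffic
```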
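For comparison, a layer 3/4 rule of this kind might be sketched as the following NetworkPolicy. The namespace names `service-a` and `service-b` are assumptions, and the rule relies on the `kubernetes.io/metadata.name` label that recent Kubernetes versions set on every namespace. The policy can say which namespace may connect, but it has no field for HTTP methods or paths.

```yaml
# Sketch only: namespace names are illustrative assumptions.
apiVersion: networking.k8s.io/v1
kind: NetworkPolicy
metadata:
  name: allow-from-service-b
  namespace: service-a            # hypothetical namespace of Service-A
spec:
  podSelector: {}                 # applies to all Pods in the namespace
  policyTypes:
  - Ingress
  ingress:
  - from:
    - namespaceSelector:
        matchLabels:
          kubernetes.io/metadata.name: service-b   # only Pods from this namespace
```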

Istio can express such path-based rules with an AuthorizationPolicy. It can also be combined with mTLS for more flexible control, such as allowing only requests from pods with a specific service account.

    apiVersion: security.istio.io/v1beta1
    kind: AuthorizationPolicy
    ...
    spec:
      action: ALLOW
      rules:
      - from:
        - source:
            namespaces: ["service-b"]
        to:
        - operation:
            methods: ["GET"]
            paths: ["/foo"]
      - from:
        - source:
            namespaces: ["service-c"]
        to:
        - operation:
            methods: ["GET"]
            paths: ["/bar"]

Mercari-specific problems we are currently working on

From here, I will explain the problems that we are actually solving with Istio at Mercari.

gRPC Load Balancing

At Mercari, gRPC is the default for communication between services. gRPC runs over HTTP/2, which multiplexes many requests over a single long-lived connection. Kubernetes Service resources act as an L4 load balancer and balance per connection rather than per request, so gRPC requests are not balanced well across pods. One solution is to use a headless Service so that the client can fetch the IP addresses of the destination pods and perform client-side load balancing.

At Mercari, most services are written in Go, so we have an internal package that performs client-side load balancing. All services use it, but there are two problems:

- When we develop an application, we need to remember to introduce this package. If we forget, requests may not be balanced well, which can lead to an unintended incident.

- There is no support for services written in other programming languages, so developers need to work out how to achieve client-side load balancing themselves.
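A headless Service is an ordinary Service with `clusterIP` set to `None`. The sketch below assumes a service named `grpc-server` listening on port 50051; both are illustrative. With this definition, a DNS lookup of the Service name returns the individual pod IP addresses instead of a single virtual IP, and the client is responsible for spreading requests across them.

```yaml
# Sketch only: the name, label, and port are illustrative assumptions.
apiVersion: v1
kind: Service
metadata:
  name: grpc-server-headless   # hypothetical name
spec:
  clusterIP: None              # headless: DNS resolves to the Pod IPs directly
  selector:
    app: grpc-server
  ports:
  - name: grpc
    port: 50051
    targetPort: 50051
```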

In particular, the second one is an important point for enabling Polyglot Programming in microservices.

Since Istio supports HTTP/2 load balancing, a service gets gRPC load balancing simply by using Istio. The balancing is done by the Envoy proxy itself, which makes it language-agnostic.
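As a hedged sketch of the Istio side, the manifests below declare the port as gRPC through its name and select a load balancing algorithm with a DestinationRule. The service name, label, port number, and the choice of `ROUND_ROBIN` are assumptions for illustration.

```yaml
# Sketch only: names, the port, and the algorithm are illustrative assumptions.
apiVersion: v1
kind: Service
metadata:
  name: grpc-server
spec:
  selector:
    app: grpc-server
  ports:
  - name: grpc              # the "grpc" port name tells Istio the protocol
    port: 50051
---
apiVersion: networking.istio.io/v1beta1
kind: DestinationRule
metadata:
  name: grpc-server
spec:
  host: grpc-server
  trafficPolicy:
    loadBalancer:
      simple: ROUND_ROBIN   # the caller's Envoy proxy rotates across the Pods
```

The application keeps calling the normal Service name, and no client library is involved.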

Istio Ingress Gateway

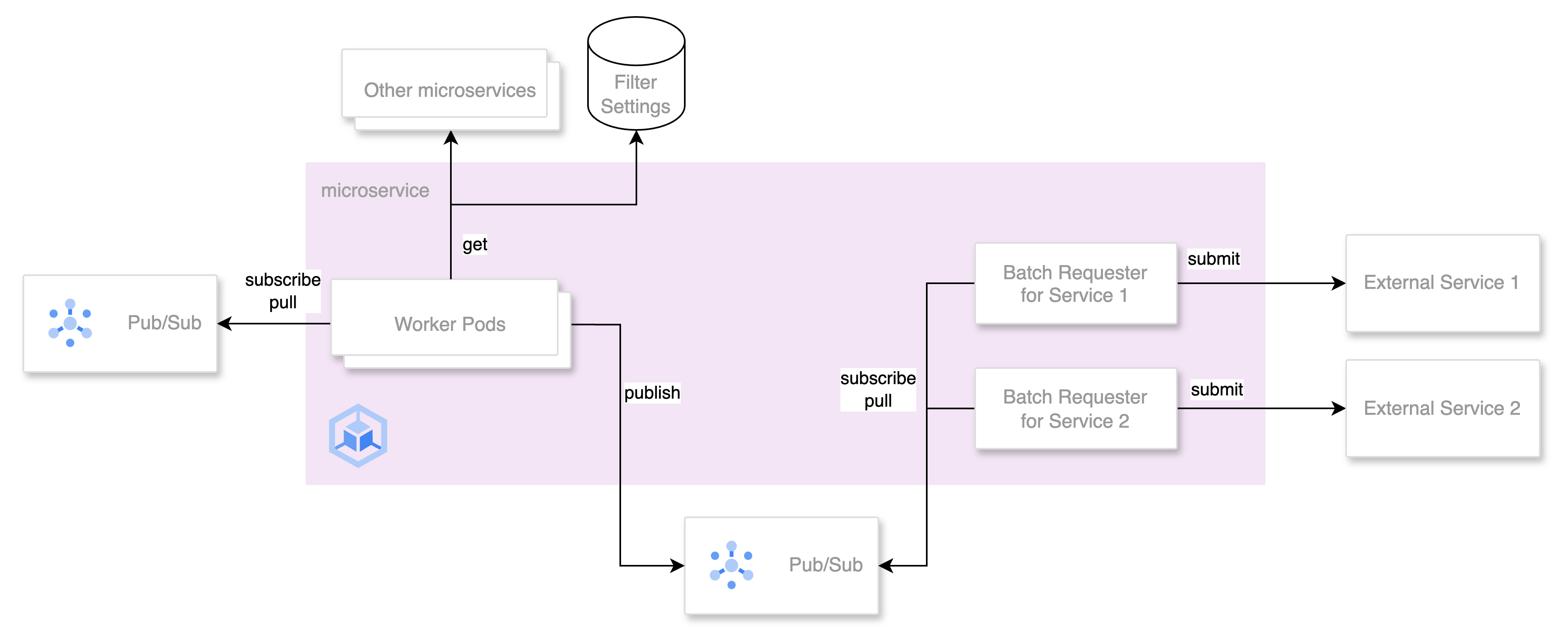

At Mercari, we adopt the Strangler Fig pattern, using an API Gateway implemented in Go, to migrate to microservices.

When developers want to expose/change their service, they open a Pull Request (PR). As the number of microservices increases, the workload of the team maintaining the API Gateway also increases due to reviews and releases.

Ideally, each service should own its routing information, while the network team manages the application that handles the traffic and common functions such as logging and authentication. This would allow developers to change their endpoints at any time.

We are currently considering the introduction of Istio Ingress Gateway as one of the solutions.

The Istio Ingress Gateway is actually an Envoy proxy. It communicates with Istiod, the same control plane used by the Envoy proxies deployed with the applications. Which endpoints to expose and which services receive the requests are defined using two of Istio’s CustomResourceDefinitions (CRDs), Gateway and VirtualService. Istiod collects the information from these resources and passes it to the Istio Ingress Gateway.

Additionally, since each resource can be stored in the namespace of each service, the endpoints and routing information can be managed by the owner of each service, while the network team manages the gateway Pods and common features such as logging and authentication. This allows us to separate responsibilities!
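One possible split of responsibilities is sketched below: a Gateway owned by the network team and a VirtualService owned by a service team in its own namespace. The namespaces, resource names, host name, and the `istio: ingressgateway` selector label are all assumptions for illustration and may differ from an actual setup.

```yaml
# Sketch only: namespaces, names, hosts, and labels are illustrative assumptions.
apiVersion: networking.istio.io/v1beta1
kind: Gateway
metadata:
  name: public-gateway
  namespace: istio-ingress       # hypothetical namespace owned by the network team
spec:
  selector:
    istio: ingressgateway        # assumed label on the ingress gateway Pods
  servers:
  - port:
      number: 80
      name: http
      protocol: HTTP
    hosts:
    - "api.example.com"          # hypothetical public host name
---
apiVersion: networking.istio.io/v1beta1
kind: VirtualService
metadata:
  name: service-a-routes
  namespace: service-a           # hypothetical namespace owned by the service team
spec:
  hosts:
  - "api.example.com"
  gateways:
  - istio-ingress/public-gateway # binds these routes to the Gateway above
  http:
  - match:
    - uri:
        prefix: /foo             # endpoint exposed by this service
    route:
    - destination:
        host: service-a.service-a.svc.cluster.local
        port:
          number: 80
```

With a layout like this, exposing a new path is a change to the VirtualService in the service's own namespace.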

Providing features using Istio

We also combine low-level Istio features to make higher-level features with more abstraction.

One example is Canary Release built on Istio’s Traffic Management. Istio allows weighted routing with a VirtualService, and we can combine it with a DestinationRule to gradually shift traffic to newer versions of the application.
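The subsets that a VirtualService routes to, such as `version-a` and `version-b` in the earlier weighted routing example, are defined in a DestinationRule. The sketch below assumes the Pods carry a `version` label with the values `a` and `b`; the label and the resource name are illustrative.

```yaml
# Sketch only: the resource name and Pod labels are illustrative assumptions.
apiVersion: networking.istio.io/v1beta1
kind: DestinationRule
metadata:
  name: http-server
spec:
  host: http-server
  subsets:
  - name: version-a      # referenced as "subset: version-a" in the VirtualService
    labels:
      version: a         # assumed Pod label for the current version
  - name: version-b      # referenced as "subset: version-b" in the VirtualService
    labels:
      version: b         # assumed Pod label for the new (canary) version
```

A canary release then becomes a sequence of weight changes in the VirtualService, for example from 90/10 towards 0/100, while the DestinationRule stays the same.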

We also provide a PR environment using Istio’s Traffic Management. This will be detailed in an upcoming article next week so check it out if you’re interested.

Use Istio without being aware of Istio

So far, I have introduced the problems that Istio solves. To solve these problems, however, developers need to define Istio resources such as VirtualService and Gateway. In other words, developers need to have knowledge of Istio. Is that an ideal situation? Not quite… The ideal situation is one where developers can use Istio without being aware of it in basic use cases.

For example, when a developer wants to use retries, they should only need to declare "retry x times" rather than create a VirtualService from scratch. To realize that, we have started considering the use of CUE, which is explained in this great article that is also part of this blog series.

We hope that using CUE will allow developers to use Istio’s low-level features along with our higher-level features without being aware of Istio.

Conclusion

In this article, we explained what problems Istio solves.

The network team covers a wide range of north-south and east-west components, such as the CDN and the data center, as well as Istio. I think it’s exciting to think about how to use these to solve the problems that occur at Mercari, so if you are interested, please apply from the link below!