This post is for Day 07 of Mercari Advent Calendar 2021, brought to you by @primaprashant from the Mercari AI-CRE team.

Mercari has customer support offices in Tokyo, Sendai, and Fukuoka. We have dedicated agents to provide support and help customers resolve issues they encounter. Quick resolution of customer issues is essential for providing a delightful user experience.

Our team developed a feature that helps customer support (CS) agents reply to customer inquiries quickly. Before I can describe the feature, some background on the CS tools and operations is required. Let’s jump right into it!

Contact Tool

The contact tool is an internal tool used by CS agents to reply to customer inquiries. It was designed to meet the various needs of CS agents and provides many functions that simplify their work. It also lets a customer send multiple messages in a single inquiry, presented in a chat-like interface. More details about the contact tool are out of scope for this post, but hopefully we will cover them in a later one.

Before we proceed further, we need to introduce some terminology and describe how a typical inquiry is handled.

Interaction between the customer and the CS agent

Fig 1: Entities involved in an inquiry.

- If a customer is facing a problem, they can send an inquiry. When the customer sends the first message, a new Case is created. The purpose of a case is to resolve a single problem. If a customer is facing multiple problems, they will create multiple cases.

- Customers can send multiple messages in a case. Each message in a case is called a Contact.

- Each case has an associated Skill. A case has one and only one skill associated with it. The skill of the case identifies the kind of problem the customer is facing, for example shipping, payments, or login issues. We have hundreds of different skills, and a single one is assigned to each case. How a skill is assigned to a case is outside the scope of this post. All you need to understand for now is that every case has a skill associated with it.

- Each CS agent has multiple skills associated with them. This means each CS agent can assist only with certain types of problems, not all of them. A CS agent can potentially handle a case if they have the skill associated with the case.

- After a new case is created, a skill is assigned to it. The case is then assigned to one of the agents who have that skill.

- Agents don’t have to write replies from scratch every time. We have a few thousand templates, each containing a response intended for a very specific scenario. Agents can select one of these templates to send a response, and, if required, modify the template’s content before sending the final response.

- Each message sent by the agent to the customer in a case is called a Reply.
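To make these relationships concrete, here is a minimal Python sketch of the entities. The class names, field names, and the eligible_agents helper are hypothetical illustrations, not the contact tool’s actual data model; the only rules it encodes are the ones stated above: a case has exactly one skill, an agent has several skills, and an agent can handle a case only if they have the case’s skill.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Set


# Hypothetical data model; names are illustrative, not the contact tool's schema.
@dataclass
class Contact:
    text: str  # one message sent by the customer within a case


@dataclass
class Reply:
    text: str
    template_id: Optional[int] = None  # hypothetical: template the agent started from, if any


@dataclass
class Case:
    case_id: int
    skill_id: int  # exactly one skill per case
    contacts: List[Contact] = field(default_factory=list)
    replies: List[Reply] = field(default_factory=list)


@dataclass
class Agent:
    agent_id: int
    skill_ids: Set[int]  # an agent has multiple skills


def eligible_agents(case: Case, agents: List[Agent]) -> List[Agent]:
    """Agents who have the case's skill and can therefore potentially handle it."""
    return [agent for agent in agents if case.skill_id in agent.skill_ids]
```

For example, a case with a shipping skill can only be assigned to agents whose skill_ids include that shipping skill.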

Now that we understand how a typical inquiry is handled, let’s summarize the entire process.

- When the customer sends the first message for a problem, a new case is created.

- A skill is assigned to the case, and the case is assigned to one of the agents who also have that skill.

- The agent selects a template from a list of templates and sends a reply to the customer.

The feature we developed helps the agents in selecting a suitable template for the reply.

Hypothesis for providing suggestions

Now we understand how a typical inquiry is handled. But there is a problem: there are a large number of templates to choose from!

With so many templates, finding a suitable one can be difficult and time-consuming. So we wanted to develop a feature that suggests, for each case, 3 templates that agents can potentially use to reply to the customer.

Remember that every case has an associated skill. In the spirit of the Pareto principle (the 80/20 rule), our intuition was that for each skill, only a small number of templates were used very frequently. In other words, we thought that for certain types of problems, agents used certain templates more frequently than others.

Hypothesis: For each skill, a small number of templates are used very often.

Now, if we could verify that our hypothesis was true, we could use it to provide template suggestions for cases. How?

Assuming our hypothesis is correct, we can look at past data, say all the cases from the last 3 months, find the most frequently used templates for each skill, and store the 3 most frequently used templates for each skill. For a new case, we check which skill is associated with it and look up the stored results for that skill. We can then provide those 3 templates as suggestions to the agents. Pretty neat, right?
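The idea can be sketched in a few lines of Python. This is a minimal illustration, not our production code: it assumes the past data is available as hypothetical (skill_id, template_id) pairs, one per reply sent during the look-back window, and uses collections.Counter to count template usage per skill.

```python
from collections import Counter, defaultdict
from typing import DefaultDict, Dict, Iterable, List, Tuple


def build_top_templates(
    replies: Iterable[Tuple[int, int]], n: int = 3
) -> Dict[int, List[int]]:
    """Map each skill to its n most frequently used templates.

    `replies` is a hypothetical stream of (skill_id, template_id) pairs,
    one per reply sent during the look-back window (e.g. the last 3 months).
    """
    usage: DefaultDict[int, Counter] = defaultdict(Counter)
    for skill_id, template_id in replies:
        usage[skill_id][template_id] += 1
    # most_common(n) returns (template_id, count) pairs, highest count first.
    return {skill: [t for t, _ in counts.most_common(n)] for skill, counts in usage.items()}


def suggest_templates(case_skill_id: int, top_templates: Dict[int, List[int]]) -> List[int]:
    """Look up the stored suggestions for a new case's skill."""
    # A skill with no replies in the window gets no suggestions.
    return top_templates.get(case_skill_id, [])
```

The expensive part (counting) happens once over the historical data; serving a new case is then a single dictionary lookup.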

All that remained was validating our hypothesis!

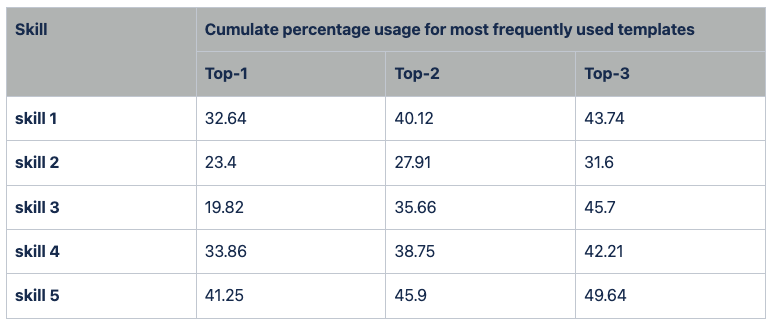

We went digging through the data. Table 1 contains the results for the top 5 skills, ordered by the number of cases in which each skill was present. I have changed the names of the skills.

Table 1: Frequency of usage of top templates by skill.

Table 1: For each of the top 5 skills, the table shows how frequently replies are sent using one of the top 3 most frequently used templates for that skill. For example, in cases with skill 5, 41.25% of replies are sent using the most frequently used template, 45.9% using one of the top 2 most frequently used templates, and 49.64% using one of the top 3 most frequently used templates.

From the table, you can see that for each of the top 5 skills, the top 3 templates were used more than 40% of the time. We also calculated the weighted average, weighting each skill by its share of the total number of cases, and found that about 45% of the time, the replies made by the agents used one of the top 3 templates for that skill.
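The two numbers discussed above, per-skill top-k coverage (the columns of Table 1) and the case-weighted average across skills, can be computed as sketched below. This is a hedged illustration: the input layout (template IDs used in replies, grouped by skill, plus case counts per skill) is an assumption, and the toy data in any usage is made up, not Mercari data.

```python
from collections import Counter
from typing import Dict, List


def top_k_coverage(template_ids: List[int], k: int) -> float:
    """Fraction of a skill's replies that used one of its k most used templates."""
    if not template_ids:
        return 0.0
    counts = Counter(template_ids)
    top_k_uses = sum(n for _, n in counts.most_common(k))
    return top_k_uses / len(template_ids)


def weighted_top_k_coverage(
    replies_by_skill: Dict[int, List[int]],  # skill -> template IDs used in its replies
    cases_by_skill: Dict[int, int],          # skill -> number of cases with that skill
    k: int = 3,
) -> float:
    """Average of per-skill coverage, weighted by each skill's share of all cases."""
    total_cases = sum(cases_by_skill.values())
    return sum(
        (n_cases / total_cases) * top_k_coverage(replies_by_skill.get(skill, []), k)
        for skill, n_cases in cases_by_skill.items()
    )
```

Calling top_k_coverage with k = 1, 2, 3 for one skill gives the cumulative figures of a single row in Table 1; weighting by case counts keeps rarely seen skills from distorting the overall estimate.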

This seemed to validate our hypothesis. We decided that this was a good enough starting point and we wanted to implement this system. If we decide to build a more intelligent system to provide suggestions, this will also act as a baseline.

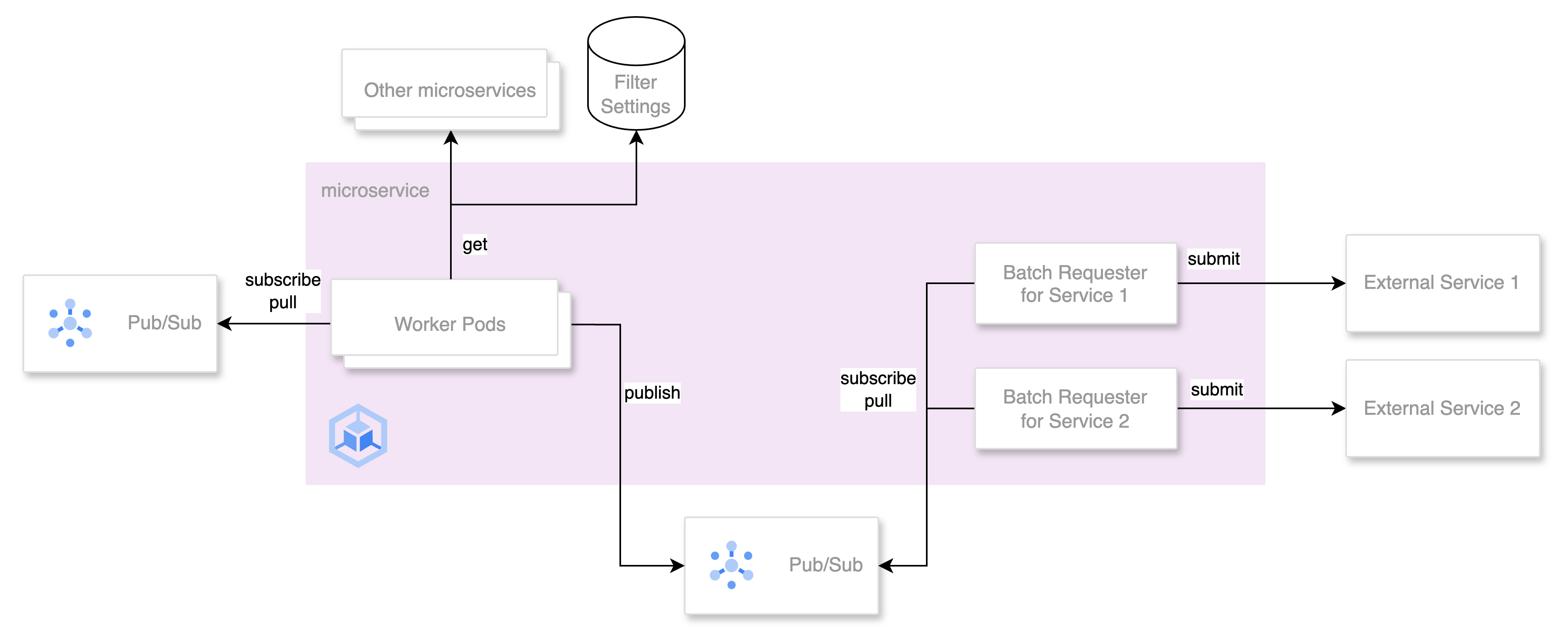

Implementation of template suggestion service

Based on the explanation in the previous section, our service to provide suggestions (template suggestion service) is supposed to work in the following way:

- receive a request to provide suggestions for a new case

- check the skill of the case

- check the last 3 months of data to find the most frequently used templates for that skill

- return the top 3 templates as suggestions

There is one problem though. For every request, we need to run a heavy query that processes a very large number of rows in the database. This is not very good for performance.

Here is a key insight: the 3 most frequently used templates for each skill don’t change often. They don’t change every minute or every hour; since we consider the last 3 months of data, any change happens over several days. So it is not necessary to query the database for every request.

Based on this insight, we made the following decisions for the implementation:

- Instead of querying the database for the 3 most frequently used templates for a skill, we wrote a query that returns the 3 most frequently used templates for ALL skills.

- We cache the results of the query so that we don’t have to query the database for each new request.
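A single query that returns the top 3 templates for every skill can be written with a window function. The post does not say which database or schema is used, so the sketch below is an assumption throughout: a hypothetical replies table with skill_id, template_id, and created_at (ISO date strings), run against SQLite (window functions need SQLite 3.25 or later) via Python’s sqlite3 module. ROW_NUMBER() ranks templates within each skill by usage, and the outer query keeps ranks 1 to 3.

```python
import sqlite3
from typing import Dict, List

# Hypothetical schema: replies(skill_id INTEGER, template_id INTEGER, created_at TEXT)
TOP_TEMPLATES_FOR_ALL_SKILLS = """
WITH counts AS (
    SELECT skill_id, template_id, COUNT(*) AS uses
    FROM replies
    WHERE template_id IS NOT NULL          -- ignore replies written without a template
      AND created_at >= ? AND created_at < ?
    GROUP BY skill_id, template_id
),
ranked AS (
    SELECT skill_id, template_id,
           ROW_NUMBER() OVER (
               PARTITION BY skill_id
               ORDER BY uses DESC, template_id ASC   -- deterministic tie-break
           ) AS rn
    FROM counts
)
SELECT skill_id, template_id
FROM ranked
WHERE rn <= 3
ORDER BY skill_id, rn
"""


def top_templates_for_all_skills(
    conn: sqlite3.Connection, start_date: str, end_date: str
) -> Dict[int, List[int]]:
    """Run one query for ALL skills and group the rows into {skill: [top templates]}."""
    result: Dict[int, List[int]] = {}
    for skill_id, template_id in conn.execute(
        TOP_TEMPLATES_FOR_ALL_SKILLS, (start_date, end_date)
    ):
        result.setdefault(skill_id, []).append(template_id)
    return result
```

Because the rows come back ordered by skill and rank, each skill’s list is already sorted from most to least used.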

The caching of the query result works as follows. We implemented a method get_suggested_templates_for_each_skill:

```python
import functools
from typing import Dict, List


@functools.lru_cache(maxsize=1)
def get_suggested_templates_for_each_skill(
    start_date: str, end_date: str
) -> Dict[int, List[int]]:
    # Body omitted: queries the database and returns {skill: [top 3 template IDs]}.
    ...
```

The input parameters start_date and end_date are used in the query: we analyze the data between the start date and the end date to find the most frequently used templates for all skills. After getting the results back from the database, we convert them into a dictionary whose keys are the skills and whose values are lists of the 3 most frequently used templates for each skill. The result of this method is cached based on its input parameters, using the lru_cache decorator from Python’s functools module. Because maxsize=1, only the result for the most recent pair of dates is kept; calling the method with a new pair of dates evicts the previous entry.

Since the results are cached based on the dates, which change once a day, the suggestions are updated once every day. For the first request of the day, the query is sent to the database and the results are cached. For the rest of the requests on the same day, the cached results are used. This way, we avoid querying the database on every request.

So, to summarize, our template suggestion service works in the following way:

- receive a request to provide suggestions for a new case

- check the skill of the case

- get the dictionary containing suggested templates for each skill either from the cached results or by querying the database

- get the top 3 templates for the skill from the dictionary and return them as suggestions

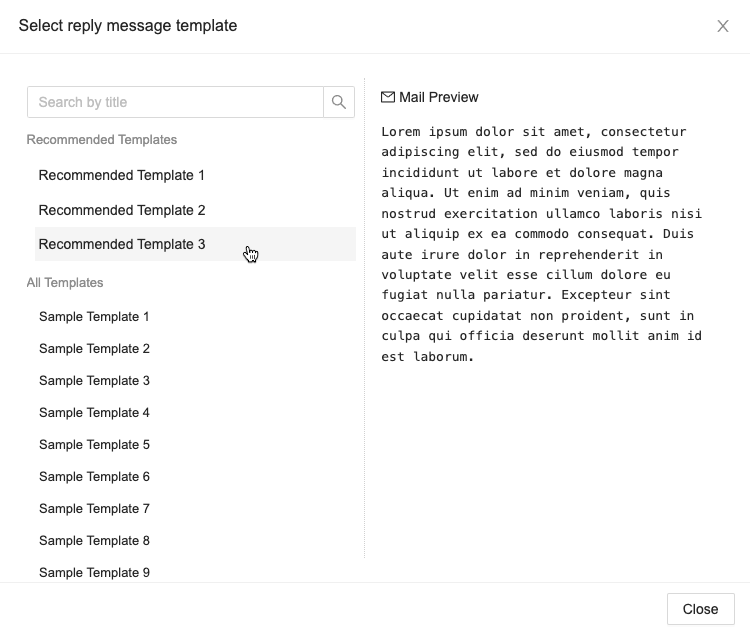

The image below shows the dialog box in the contact tool through which agents select a suitable template for the reply. I have taken the liberty of changing the actual template names and their contents. As you can see, the 3 suggestions for the case are displayed at the top.

Fig 2: Template selection dialog box in the contact tool.

A/B test

The main goal of providing template suggestions is to reduce the average handling time (AHT). Handling time is the time from the moment an agent is assigned a case to the moment the agent sends the reply. The AHT metric has a large impact on customer support operations and decisions: it helps determine the number of CS agents we need to employ and, consequently, the budget required for CS operations. A lower AHT also helps ensure that the service level for customer inquiries is maintained.

After we finished the implementation, we ran an A/B test to detect if the feature was actually contributing towards reducing the AHT.

We ran the A/B test for a week and observed a 2.2% reduction in AHT, after which we decided to release the feature to 100% of the production environment.
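For reference, AHT as defined above and a relative reduction between the control and treatment groups can be computed as sketched below. This is an illustration under assumptions: the post does not say exactly how the 2.2% figure was derived, so the sketch assumes it is the relative difference of mean handling times, and the (assigned_at, replied_at) input layout is hypothetical. It computes only the point estimate, not the statistical analysis of the test.

```python
from datetime import datetime
from statistics import mean
from typing import List, Tuple


def average_handling_time(cases: List[Tuple[datetime, datetime]]) -> float:
    """AHT in seconds. Each tuple is (assigned_at, replied_at) for one case."""
    return mean(
        (replied_at - assigned_at).total_seconds() for assigned_at, replied_at in cases
    )


def relative_reduction(control_aht: float, treatment_aht: float) -> float:
    """Fractional AHT reduction of the treatment group relative to control."""
    return (control_aht - treatment_aht) / control_aht
```

With this definition, a result of 0.022 would correspond to a 2.2% reduction.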

Conclusion

We learned about the basics of customer operations and how a typical inquiry is handled. We learned about a system to provide template suggestions for quicker response and looked into its implementation details.

Future plans

- The suggestions we are providing are based on the past behavior of agents, which limits the accuracy we can achieve this way. From the results in Table 1, you can see that it is close to 40%, and this was also confirmed by the online results. Since the top 3 templates are used in only about 45% of cases, we need to build a better system that provides correct suggestions for more cases and improves accuracy. This is what we will focus on next, and our current system will act as a baseline for future improvements.

- Remember that we skipped over the question of how a skill is assigned to a case. We do have a system for it now, but it’s not perfect: incorrect skills are often attached to cases. We also plan to improve that system.

ML and NLP are most definitely going to play a big role in both of these improvements.

Look forward to tomorrow’s article by @afroscript.