Hello, I am Kumar Abhinav from the Machine Learning Team at Mercari Inc. Japan.

My team at Mercari is developing a machine learning system to model customers’ profiles, attributes, and behaviors. Mercari always strives to improve the customer experience, and our team helps by providing target customer segments for different initiatives.

As business requirements for new profiling models grow, so does the need for a general platform to host different machine learning models. In this article, I would like to share how we developed a general customer profiling platform to host machine learning models.

Background

At Mercari, multiple campaigns run to provide incentives or coupons to customers. Multiple teams develop campaign-specific customer profiling models, based on customer insights, to meet each campaign’s needs. In addition, each team has to implement its own system to run the developed model, which incurs system and human resource costs. This calls for a centralized customer profiling service at Mercari.

To support our mission of letting every team host any customer profiling model on a centralized system, our team started designing a general platform.

Overview of System Architecture

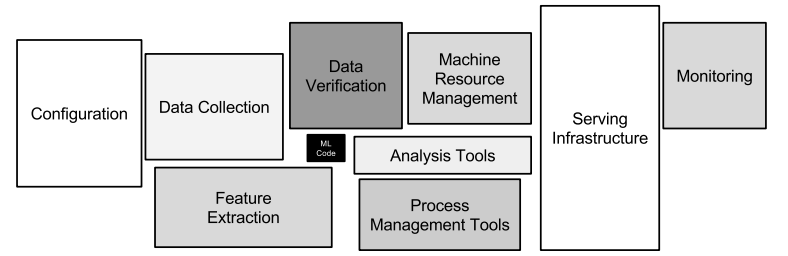

Developing a machine learning-based service requires not only creating a model but also building a system to serve the model, monitor the system, and analyze model performance. Fig 1 shows the various components of a machine learning system.

The aim of the profiling platform is to let different teams easily integrate and host their machine learning models on it. The platform should also accommodate the various components of a machine learning system shown in Fig 1.

Following are the important features of the developed platform:

- Easy model integration with the platform

- Stable model execution

- ML Model performance dashboard

- Complete monitoring of the platform components

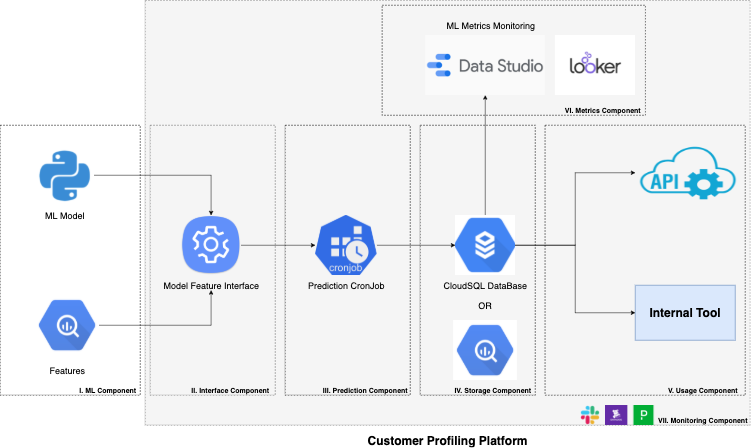

Fig 2 shows the system architecture of the developed Customer Profiling Platform, which covers the features listed above. The shaded area contains the components of the Profiling Platform.

Customer Profiling Platform

As shown in Fig 2, the customer profiling platform consists of the following key components:

- ML Component

- Interface Component

- Prediction Component

- Storage Component

- Usage Component

- Metrics Component

- Monitoring Component

The first component, the ML Component, is owned by the team using our platform. The following subsections describe each component in detail.

ML Component

The Machine Learning Component (ML Component) is developed and owned by the teams (clients) using our platform. Each team develops the ML model for its use case with tools such as Jupyter Notebook or Kubeflow. The trained model is then stored in a GCS bucket [2] that is shared with the platform so that the platform can access it. Along with the model file, the team shares a SQL file that the platform uses to fetch the model’s features from BigQuery [3].

Interface Component

The Interface Component is the most important part of the platform. Its main role is to serve as a general interface for efficiently integrating new models into the platform. Different ML models need different execution environments, and this component handles that requirement. The Interface Component is discussed in detail in a later section.

Prediction Component

The platform uses scheduled Kubernetes CronJobs [4] to make batch predictions. The Interface Component ensures that the ML model is integrated with the platform and ready for inference. The platform currently focuses on batch predictions; online predictions will be added later. The client using our platform provides the prediction schedule, and we create a CronJob for model inference.
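Since the client supplies the schedule, each model’s inference job maps naturally onto one CronJob. Below is a minimal sketch, assuming a container image that bundles the prediction controller, of how the platform could generate that manifest from the client’s schedule. The `build_prediction_cronjob` helper, the image name, and the command are hypothetical.

```python
import json
from typing import Any, Dict, List


def build_prediction_cronjob(
    service_name: str, schedule: str, image: str, command: List[str]
) -> Dict[str, Any]:
    """Return a Kubernetes batch/v1 CronJob manifest that runs one model's batch prediction."""
    # Standard cron syntax: minute, hour, day of month, month, day of week.
    if len(schedule.split()) != 5:
        raise ValueError(f"expected a 5-field cron schedule, got {schedule!r}")
    return {
        "apiVersion": "batch/v1",
        "kind": "CronJob",
        "metadata": {"name": f"{service_name}-prediction"},
        "spec": {
            "schedule": schedule,
            # Skip a run instead of starting a second one if the previous job is still running.
            "concurrencyPolicy": "Forbid",
            "jobTemplate": {
                "spec": {
                    "template": {
                        "spec": {
                            "containers": [
                                {"name": "predictor", "image": image, "command": command}
                            ],
                            "restartPolicy": "OnFailure",
                        }
                    }
                }
            },
        },
    }


if __name__ == "__main__":
    manifest = build_prediction_cronjob(
        service_name="gender",
        schedule="00 18 * * *",
        image="gcr.io/example-project/profiling-predictor:0.0.1",  # hypothetical image
        command=["python", "main.py"],  # hypothetical controller entry point
    )
    # JSON is valid YAML, so the output can be applied with `kubectl apply -f`.
    print(json.dumps(manifest, indent=2))
```

`concurrencyPolicy: Forbid` is one reasonable choice for batch scoring. If a run overruns, the next scheduled run is skipped, so two runs never write overlapping results.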

Storage Component

The Storage Component stores the predictions made by the Prediction Component in the required format. A persistent database is coupled with the platform to store prediction results; we use Google CloudSQL [5]. The schema of the storage tables is finalized according to each client’s requirements. One limitation of this persistent storage is that the stored data can’t be used directly for analytics. Therefore, the tables in the CloudSQL DB are mirrored to the data warehouse, BigQuery [3], through a Dataflow pipeline developed internally by Mercari engineers.
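A common pattern for storing batch predictions is an upsert keyed by customer. With an upsert, re-running the same batch overwrites rows instead of duplicating them. The sketch below uses Python’s built-in `sqlite3` purely as a stand-in for CloudSQL. The table name, columns, and key (`user_id`, `version`) are hypothetical. Cloud SQL for PostgreSQL accepts the same `ON CONFLICT ... DO UPDATE` clause, while Cloud SQL for MySQL would need `INSERT ... ON DUPLICATE KEY UPDATE` instead.

```python
import sqlite3
from typing import Iterable, Tuple

# Hypothetical table for the "gender" service; the real schema is agreed with each client.
CREATE_TABLE = """
CREATE TABLE IF NOT EXISTS gender_predictions (
    user_id      INTEGER NOT NULL,
    version      TEXT    NOT NULL,
    label        TEXT    NOT NULL,
    probability  REAL    NOT NULL,
    predicted_at TEXT    NOT NULL,
    PRIMARY KEY (user_id, version)
)
"""

# Upsert: insert new rows, overwrite existing (user_id, version) rows (SQLite 3.24+).
UPSERT = """
INSERT INTO gender_predictions (user_id, version, label, probability, predicted_at)
VALUES (?, ?, ?, ?, ?)
ON CONFLICT (user_id, version) DO UPDATE SET
    label = excluded.label,
    probability = excluded.probability,
    predicted_at = excluded.predicted_at
"""


def store_predictions(
    conn: sqlite3.Connection,
    version: str,
    predicted_at: str,
    rows: Iterable[Tuple[int, str, float]],
) -> None:
    """Upsert (user_id, label, probability) rows so a re-run overwrites instead of duplicating."""
    params = [(user_id, version, label, prob, predicted_at) for user_id, label, prob in rows]
    with conn:  # commits on success, rolls back if any row fails
        conn.execute(CREATE_TABLE)
        conn.executemany(UPSERT, params)
```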

Usage Component

The Usage Component provides the predictions generated by the integrated ML models to the final use cases, e.g., campaigns and other microservices. Our platform serves batch prediction results to these use cases through the following tools:

- Dedicated APIs, written in Go, that serve prediction results to other microservices.

- Integration of predictions into an internal tool as attributes, which teams designing coupon-distribution campaigns then use.

Metrics Component

The Metrics Component is developed for ML performance monitoring. After the ML model is released to production, we need to monitor its performance on ML metrics such as accuracy, precision, and recall. For this, we integrated Google Data Studio [6] dashboards into our platform. The dashboards read prediction results from the BigQuery tables mirrored by the Storage Component, then compute and display the desired metrics. We are also planning to integrate Looker [7] dashboards with our platform to monitor other business KPIs related to each integrated model.
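The metrics named above reduce to simple counts once predictions are joined with observed outcomes. The sketch below shows that arithmetic. It assumes ground-truth labels eventually become available for some customers; how they are collected is not described here. The dashboards themselves would likely compute the same quantities in SQL over the mirrored BigQuery tables.

```python
from typing import Dict, Hashable, Sequence


def classification_metrics(
    y_true: Sequence[Hashable], y_pred: Sequence[Hashable], positive: Hashable
) -> Dict[str, float]:
    """Accuracy over all rows; precision and recall with respect to the `positive` label."""
    if len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must have the same length")
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    correct = sum(1 for t, p in pairs if t == p)
    return {
        "accuracy": correct / len(pairs) if pairs else 0.0,
        # Report 0.0 instead of dividing by zero when there are no positive predictions/labels.
        "precision": tp / (tp + fp) if tp + fp else 0.0,
        "recall": tp / (tp + fn) if tp + fn else 0.0,
    }


if __name__ == "__main__":
    labels = [1, 0, 1, 1, 0]       # hypothetical observed outcomes
    predictions = [1, 1, 0, 1, 0]  # hypothetical batch predictions read back from BigQuery
    print(classification_metrics(labels, predictions, positive=1))
    # {'accuracy': 0.6, 'precision': 0.6666666666666666, 'recall': 0.6666666666666666}
```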

Monitoring Component

The Monitoring Component monitors the health of the Prediction Component, Storage Component, and Usage Component. Each component is monitored through different tools:

- DataDog [8]: Used to monitor Prediction cron jobs, CloudSQL DB and APIs.

- PagerDuty [9]: Integrated with DataDog monitors where critical monitoring is required, e.g., CloudSQL DB resource utilization and API error rates.

- Slack: DataDog monitors are integrated with Mercari Slack channels.

Developing Interface Component

This section discusses the implementation of the Interface Component in detail. One of the key challenges in creating a centralized platform to run ML models is to create a general adaptive environment that can cater to different ML model requirements.

Major cloud vendors provide similar services, such as AI Platform Prediction [10] of Vertex AI in GCP and Deploy Models for Inference [11] of SageMaker in AWS. Our approach to the Interface Component is inspired by Google’s Custom Prediction Routines [12].

The Interface Component consists of three main configs that the client using our platform needs to fill in: MODEL_CONFIG, FEATURE_CONFIG, and PREDICTION_CONFIG. Along with the config files, the client provides the necessary Python code, in a defined format, for model preprocessing and prediction. The platform then packages the model serving environment as a Python package, which the Prediction Component imports when making predictions.

MODEL_CONFIG

The model config contains the details of the ML model to be integrated. Clients using our platform fill in the following information:

- Model Path: GCS path to the model file; currently, the platform fetches models only through GCS paths

- Framework: Enum value indicating whether the trained model uses custom model objects (Options: CUSTOM, BASE)

```
# Configuration file for MODEL_CONFIG
MODEL_PATH = "gs://{BUCKET_NAME}/path/to/model.pkl"
FRAMEWORK = CUSTOM
```
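Because the model is fetched through its GCS path, the platform has to validate MODEL_CONFIG and split MODEL_PATH into a bucket and an object name. The following is a hypothetical sketch; the `parse_model_config` helper and the `ModelConfig` type are illustrative, not the platform’s code.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Mapping, Tuple


class Framework(Enum):
    BASE = "BASE"      # no client-defined model classes
    CUSTOM = "CUSTOM"  # model object uses a client-defined class (model.py required)


@dataclass(frozen=True)
class ModelConfig:
    bucket: str
    blob_name: str
    framework: Framework

    @property
    def needs_model_py(self) -> bool:
        # Unpickling a custom model needs its class definition importable at load time.
        return self.framework is Framework.CUSTOM


def split_gcs_path(path: str) -> Tuple[str, str]:
    """Split 'gs://bucket/path/to/object' into ('bucket', 'path/to/object')."""
    prefix = "gs://"
    if not path.startswith(prefix):
        raise ValueError(f"MODEL_PATH must be a GCS path, got {path!r}")
    bucket, _, blob_name = path[len(prefix):].partition("/")
    if not bucket or not blob_name:
        raise ValueError(f"MODEL_PATH needs both a bucket and an object name: {path!r}")
    return bucket, blob_name


def parse_model_config(raw: Mapping[str, str]) -> ModelConfig:
    """Validate the MODEL_CONFIG values supplied by a client."""
    bucket, blob_name = split_gcs_path(raw["MODEL_PATH"])
    try:
        framework = Framework[raw["FRAMEWORK"]]
    except KeyError:
        raise ValueError(f"FRAMEWORK must be one of {[f.name for f in Framework]}") from None
    return ModelConfig(bucket, blob_name, framework)
```

The resulting bucket and object name could then be passed to the google-cloud-storage client, e.g. `storage.Client().bucket(cfg.bucket).blob(cfg.blob_name).download_to_filename(local_path)`.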

FEATURE_CONFIG

The feature config file contains the details of the features used by the provided ML model. Along with the feature config, the client also provides the SQL file that fetches the features from BigQuery, as well as the preprocessing files.

- Feature SQL Path: Path to SQL file present in the repository

- Preprocess Object Path: GCS path to the preprocess object (preprocess.pkl) if one exists; otherwise, an empty string

```
# Configuration file for FEATURE_CONFIG
FEATURE_SQL_PATH = "features.sql"
PREPROCESS_OBJECT_PATH = "gs://{BUCKET_NAME}/path/to/service/preprocess.pkl"
```
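Here is a sketch of how FEATURE_CONFIG might be loaded. It assumes that the SQL path is relative to the client’s directory in the repository and that an empty PREPROCESS_OBJECT_PATH means there is no preprocess object. `load_feature_config` and `FeatureConfig` are hypothetical names.

```python
from dataclasses import dataclass
from pathlib import Path
from typing import Mapping, Optional


@dataclass(frozen=True)
class FeatureConfig:
    feature_sql: str                       # query text read from FEATURE_SQL_PATH
    preprocess_object_path: Optional[str]  # None when the client has no preprocess.pkl


def load_feature_config(raw: Mapping[str, str], repo_dir: Path) -> FeatureConfig:
    """Read the feature query from the repository and normalise the optional preprocess path."""
    sql_path = repo_dir / raw["FEATURE_SQL_PATH"]
    feature_sql = sql_path.read_text(encoding="utf-8").strip()
    if not feature_sql:
        raise ValueError(f"{sql_path} is empty")
    preprocess = raw.get("PREPROCESS_OBJECT_PATH", "").strip()
    if preprocess and not preprocess.startswith("gs://"):
        raise ValueError(f"PREPROCESS_OBJECT_PATH must be a GCS path or empty, got {preprocess!r}")
    # An empty string in the config means "no preprocess object".
    return FeatureConfig(feature_sql, preprocess or None)
```

The resulting query text could then be run with the BigQuery client library, e.g. `bigquery.Client().query(cfg.feature_sql).result()`.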

PREDICTION_CONFIG

The prediction config file contains the details of the predictions made by the model. Along with the prediction config, the client also provides a prediction file that post-processes the predictions.

- Service Name: Name of the service denoting the model’s use case

- Version: Service version used when packaging with setup.py

- Prediction Schedule: CronJob prediction schedule

- Prediction Names: Names of columns storing the prediction information

- Prediction Names Datatype: Datatype of the above prediction names

```
# Configuration file for PREDICTION_CONFIG
SERVICE_NAME = "gender"
VERSION = "0.0.1"
PREDICTION_SCHEDULE = "00 18 * * *"
```
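PREDICTION_CONFIG drives both the CronJob and the output table, so it is worth validating before anything is deployed. The sample above does not show the keys for prediction names and their datatypes. The key names `PREDICTION_NAMES` and `PREDICTION_NAMES_DATATYPE`, the naming rules, and the allowed type set below are therefore hypothetical.

```python
import re
from typing import Any, List, Mapping

# Hypothetical constraints; key names and allowed types are illustrative, not the platform's spec.
SERVICE_NAME_RE = re.compile(r"[a-z][a-z0-9-]*")  # lowercase, reusable in Kubernetes object names
VERSION_RE = re.compile(r"\d+\.\d+\.\d+")
ALLOWED_TYPES = {"STRING", "INT64", "FLOAT64", "BOOL"}


def validate_prediction_config(cfg: Mapping[str, Any]) -> List[str]:
    """Return human-readable problems; an empty list means the config looks valid."""
    errors: List[str] = []
    if not SERVICE_NAME_RE.fullmatch(cfg.get("SERVICE_NAME", "")):
        errors.append("SERVICE_NAME must be lowercase letters, digits, or '-'")
    if not VERSION_RE.fullmatch(cfg.get("VERSION", "")):
        errors.append("VERSION must look like MAJOR.MINOR.PATCH, e.g. 0.0.1")
    if len(cfg.get("PREDICTION_SCHEDULE", "").split()) != 5:
        errors.append("PREDICTION_SCHEDULE must have 5 cron fields")
    names = list(cfg.get("PREDICTION_NAMES", []))
    types = list(cfg.get("PREDICTION_NAMES_DATATYPE", []))
    if not names:
        errors.append("PREDICTION_NAMES must not be empty")
    if len(names) != len(types):
        errors.append("PREDICTION_NAMES and PREDICTION_NAMES_DATATYPE must have the same length")
    if len(set(names)) != len(names):
        errors.append("PREDICTION_NAMES must be unique")
    unknown = sorted(set(types) - ALLOWED_TYPES)
    if unknown:
        errors.append(f"unsupported datatypes: {unknown}")
    return errors
```

Returning a list of problems rather than raising on the first one lets a client fix every issue in a single round trip.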

Custom Prediction Routine

We need to set up the environment for model prediction. For this, we plan to package the required Python files (preprocess.py, model.py, and predictor.py), together with their requirements, into a single package.

The provided preprocess.py and predictor.py should follow the definitions in Custom Prediction Routines [12]. model.py needs to be added only if a custom ML model is used for training, because the model’s class implementation is required to load the model pickle file.
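In Custom Prediction Routines, a predictor is a class with a `predict(self, instances, **kwargs)` method and a `from_path(cls, model_dir)` class method that loads artifacts from a directory. The sketch below follows that shape using only the standard library. The `Preprocessor` class, the threshold-based post-processing, and the file names inside `model_dir` are illustrative assumptions. The comment in `from_path` shows why model.py matters: `pickle` can only rebuild a custom model object if its class can be imported.

```python
import os
import pickle
from typing import Any, Dict, List, Optional, Sequence


class Preprocessor:
    """Hypothetical preprocess.py class: centres each feature on a mean learned during training."""

    def __init__(self, means: Sequence[float]) -> None:
        self._means = list(means)

    def preprocess(self, instances: Sequence[Sequence[float]]) -> List[List[float]]:
        return [[x - m for x, m in zip(row, self._means)] for row in instances]


class Predictor:
    """predictor.py sketch following the Custom Prediction Routine predictor shape."""

    def __init__(self, model: Any, preprocessor: Optional[Preprocessor] = None,
                 threshold: float = 0.5) -> None:
        self._model = model
        self._preprocessor = preprocessor
        self._threshold = threshold

    def predict(self, instances: Sequence[Sequence[float]], **kwargs: Any) -> List[Dict[str, Any]]:
        if self._preprocessor is not None:
            inputs = self._preprocessor.preprocess(instances)
        else:
            inputs = [list(row) for row in instances]
        scores = self._model.predict(inputs)  # assumes one score in [0, 1] per row
        # Post-processing: turn raw scores into the columns listed in PREDICTION_CONFIG.
        return [
            {"label": "positive" if s >= self._threshold else "negative", "probability": float(s)}
            for s in scores
        ]

    @classmethod
    def from_path(cls, model_dir: str) -> "Predictor":
        """Load model.pkl and, if present, preprocess.pkl from a local directory."""
        with open(os.path.join(model_dir, "model.pkl"), "rb") as f:
            model = pickle.load(f)  # a CUSTOM model's class must be importable (model.py)
        preprocessor = None
        preprocess_path = os.path.join(model_dir, "preprocess.pkl")
        if os.path.exists(preprocess_path):
            with open(preprocess_path, "rb") as f:
                preprocessor = pickle.load(f)
        return cls(model, preprocessor)
```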

The platform contains a setup.py that packages the Python files provided by our clients, for example:

```python
from setuptools import setup

setup(
    ...
)
```
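The arguments passed to `setup()` are not shown above. One hypothetical way to fill them from the client’s deliverables is to build the keyword arguments from PREDICTION_CONFIG and requirements.txt. The package naming scheme and the flat `py_modules` layout in this sketch are assumptions.

```python
from typing import Any, Dict, List


def read_requirements(text: str) -> List[str]:
    """Convert requirements.txt content into install_requires entries.

    Assumes plain requirement specifiers only (no -r/-e/--index-url lines or URLs with '#').
    """
    requirements = []
    for line in text.splitlines():
        spec = line.split("#", 1)[0].strip()  # drop comments and surrounding whitespace
        if spec:
            requirements.append(spec)
    return requirements


def build_setup_kwargs(service_name: str, version: str, requirements_text: str,
                       has_model_py: bool) -> Dict[str, Any]:
    """Keyword arguments a platform setup.py could pass to setuptools.setup()."""
    modules = ["preprocess", "predictor"] + (["model"] if has_model_py else [])
    return {
        "name": f"profiling-{service_name}",  # hypothetical naming convention
        "version": version,                   # VERSION from PREDICTION_CONFIG
        "py_modules": modules,                # client files placed next to setup.py
        "install_requires": read_requirements(requirements_text),
    }
```

In setup.py this would be used as `setup(**build_setup_kwargs(...))`. Running `python setup.py sdist` then produces a `.tar.gz` source distribution, like the model artifact described in the Workflow section.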

Deliverables By Our Client

To summarize, a client of the platform needs to provide the following files:

- config.yaml

  - MODEL_CONFIG: Configuration for models

  - FEATURE_CONFIG: Configuration for features

  - PREDICTION_CONFIG: Configuration for predictions

- model.py: Class implementation of the model (required only for custom models)

- features.sql: SQL file to fetch features from BigQuery

- preprocess.py: Class implementation of preprocessing

- predictor.py: Class implementation of the predictor, including post-processing

- requirements.txt: Python packages required to run the files above

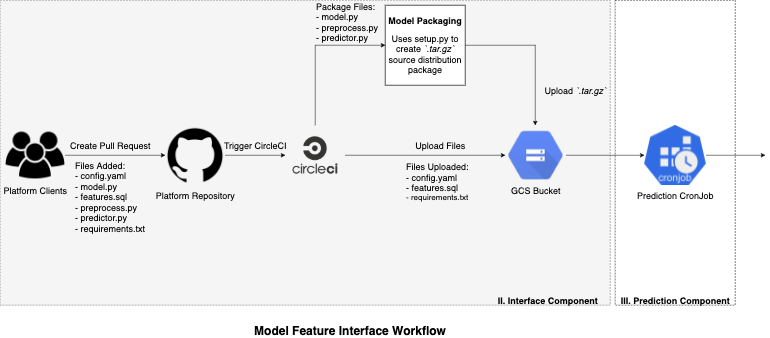

Clients submit the above deliverables to the platform’s GitHub repository. The repository uses CircleCI to package the model with the required environment.
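Before packaging, a CI step could check that a client’s directory contains every deliverable. The following minimal sketch assumes one directory per service in the platform repository and assumes that FRAMEWORK is read from MODEL_CONFIG. Both `missing_deliverables` and `check_service_dir` are hypothetical.

```python
from pathlib import Path
from typing import Iterable, List

# Files every client submits, per the deliverables list; model.py only for CUSTOM models.
REQUIRED_FILES = ("config.yaml", "features.sql", "preprocess.py", "predictor.py", "requirements.txt")


def missing_deliverables(filenames: Iterable[str], framework: str) -> List[str]:
    """Return the required files that are absent, sorted for stable CI output."""
    present = set(filenames)
    required = set(REQUIRED_FILES)
    if framework == "CUSTOM":
        required.add("model.py")
    return sorted(required - present)


def check_service_dir(service_dir: Path, framework: str) -> List[str]:
    """Convenience wrapper that inspects a client's directory in the platform repository."""
    return missing_deliverables((p.name for p in service_dir.iterdir() if p.is_file()), framework)
```

A CircleCI job could run this check and fail the build on a non-empty result, so incomplete submissions never reach the packaging step.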

Workflow

Fig 3 above shows the workflow of the Model Feature Interface.

The Prediction Component uses the uploaded model artifacts (.tar.gz), config, requirements, and feature files to make model predictions. The model artifacts are installed as a Python package in the Prediction Component. The main controller function of the prediction CronJob imports the package and calls the predictor class (from predictor.py) to make predictions. The environment required to run the model is defined in requirements.txt and is set up before the main controller function runs.
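The controller’s flow can be sketched as follows. The sketch assumes that the installed package exposes a module with a `Predictor` class implementing `from_path`/`predict`, as in Custom Prediction Routines, and that the feature query returns `(user_id, features)` pairs. `run_batch_prediction`, the module name, and the callbacks are hypothetical.

```python
import importlib
from typing import Any, Callable, Iterable, Iterator, List, Sequence, Tuple

FeatureRow = Tuple[Any, Sequence[Any]]  # (user_id, feature values) from the feature SQL


def batched(rows: Iterable[Any], size: int) -> Iterator[List[Any]]:
    """Yield lists of at most `size` rows so large feature tables are scored in chunks."""
    batch: List[Any] = []
    for row in rows:
        batch.append(row)
        if len(batch) == size:
            yield batch
            batch = []
    if batch:
        yield batch


def run_batch_prediction(
    module_name: str,
    model_dir: str,
    fetch_features: Callable[[], Iterable[FeatureRow]],
    write_predictions: Callable[[List[Tuple[Any, Any]]], None],
    batch_size: int = 1000,
) -> int:
    """Import the client's packaged predictor, score every feature row, and return the row count."""
    predictor_cls = importlib.import_module(module_name).Predictor
    predictor = predictor_cls.from_path(model_dir)
    total = 0
    for batch in batched(fetch_features(), batch_size):
        user_ids = [user_id for user_id, _ in batch]
        predictions = predictor.predict([features for _, features in batch])
        # Keep each prediction attached to its customer before handing it to storage.
        write_predictions(list(zip(user_ids, predictions)))
        total += len(predictions)
    return total
```

Passing `fetch_features` and `write_predictions` as callables keeps the controller independent of BigQuery and CloudSQL. This makes it easy to test with in-memory data.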

Conclusion

The developed general customer profiling platform currently supports batch predictions, and we plan to add online predictions in the future. We are currently onboarding customer profiling models developed by teams across Mercari.

Thank you for taking the time to read this article.