Introduction

Hi, my name is Takuma. I am a software and machine learning engineer at Mercari.

Artificial Intelligence (AI) is a buzzword nowadays. We also often see terms such as ‘Deep Learning’ and ‘Deep Neural Networks’, which are subsets of AI and machine learning. I would like to share our experiment on image classification using deep learning.

Neural Networks Winter

Deep learning is a variation of neural network techniques. At the 7th International Conference on Document Analysis and Recognition (ICDAR 2003), held in Edinburgh, UK, Simard et al. (Microsoft Research) wrote in their paper ‘Best Practices for Convolutional Neural Networks Applied to Visual Document Analysis’ that:

After being extremely popular in the early 1990s, neural networks have fallen out of favor in research in the last 5 years. In 2000, it was even pointed out by the organizers of the Neural Information Processing System (NIPS) conference that the term “neural networks” in the submission title was negatively correlated with acceptance.

(I actually attended the conference as a student and gave a presentation on digit detection and recognition.)

At that time, many researchers were drawn to other algorithms, such as Support Vector Machines (SVMs).

Why Deep Learning Again?

Some researchers, such as Yann LeCun, Geoff Hinton, Yoshua Bengio and Andrew Ng, continued to study neural networks. Thanks to their work, algorithms based on deep learning have achieved better results than other approaches on many tasks and competitions, such as ILSVRC2012. I like their talk about their struggles during the winter: Deep Learning Gurus Talk about History and Future of Machine Learning.

After their achievements, many researchers started using deep learning techniques again.

Breakthroughs in deep learning algorithms, coupled with recent hardware improvements, made deep learning more practical, since it requires large amounts of data and huge computational resources.

I like the TED talk by Fei-Fei Li (director of Stanford’s Artificial Intelligence Lab and Vision Lab) and her explanation as to why large amounts of data are needed for AI.

(I visited Stanford University last year, but I couldn’t see her unfortunately…)

Image Classification

One of the practical applications of deep learning is image classification / object recognition.

We prepared an image data set from Mercari that contains 1 million item images from about 1,000 categories (1,000 images for each category).

We used 90% of the images for training and the remaining 10% for evaluation.
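As a rough illustration of such a 90/10 split, here is a minimal sketch in plain Python. The post does not say how the split was actually made, so the per-category shuffling, the function name `split_per_category` and the toy file names are all assumptions for illustration only.

```python
import random
from collections import defaultdict


def split_per_category(labeled_paths, train_fraction=0.9, seed=0):
    """Split (path, category) pairs into train and eval lists, category by category."""
    by_category = defaultdict(list)
    for path, category in labeled_paths:
        by_category[category].append(path)

    rng = random.Random(seed)  # fixed seed so the split is reproducible
    train, evaluation = [], []
    for category in sorted(by_category):
        paths = sorted(by_category[category])
        rng.shuffle(paths)
        cut = int(round(len(paths) * train_fraction))
        train += [(p, category) for p in paths[:cut]]
        evaluation += [(p, category) for p in paths[cut:]]
    return train, evaluation


# Toy data: 2 hypothetical categories with 10 images each.
toy = [("img_%d_%d.jpg" % (c, i), "category_%d" % c)
       for c in range(2) for i in range(10)]
train_set, eval_set = split_per_category(toy)
print(len(train_set), len(eval_set))
```

Splitting inside each category keeps the ratio the same for every category, which matters when some categories are easy to confuse with each other.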

Sample Images

Training

We conducted our image classification experiment using TensorFlow. TensorFlow is Google’s open source machine learning library. It’s not just for neural networks.

We used the Inception-v3 model. It is a powerful image classification algorithm based on deep neural networks. In order to train the model from scratch, TFRecord format data is needed. A script for converting images to TFRecord format data is included in the repository.

ImageNet is a common academic image data set for image classification. The data set is described in the TED talk above. The Inception-v3 example also uses this data set for training.

Although we didn’t depend on ImageNet, we used imagenet_train.py as described in the README. The script also works for any image data set without changes, as long as the number of categories is less than or equal to 1,000 and each category has around 1,000 images.

Even if your data has more categories, you only have to change the number of categories and the number of training images in imagenet_data.py.
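To give an idea of which values are involved, the sketch below is a stand-alone stand-in for a data set definition. It is not the code from imagenet_data.py: the class name `CustomData`, its method names and the example of 1,200 categories are assumptions made for illustration.

```python
class CustomData(object):
    """Hypothetical data set definition (not the repository's class)."""

    def __init__(self, subset, num_categories, images_per_category,
                 train_fraction=0.9):
        assert subset in ("train", "validation")
        self.subset = subset
        self._num_categories = num_categories
        total = num_categories * images_per_category
        self._num_train = int(round(total * train_fraction))
        self._num_validation = total - self._num_train

    def num_classes(self):
        """Number of categories the classifier has to distinguish."""
        return self._num_categories

    def num_examples_per_epoch(self):
        """Number of images in the selected subset."""
        if self.subset == "train":
            return self._num_train
        return self._num_validation


# Assumed example: 1,200 categories with 1,000 images each, split 90/10.
train = CustomData("train", num_categories=1200, images_per_category=1000)
validation = CustomData("validation", num_categories=1200,
                        images_per_category=1000)
print(train.num_classes(), train.num_examples_per_epoch(),
      validation.num_examples_per_epoch())
```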

Then, you can run the training script.

    # Build the model. Note that we need to make sure the TensorFlow is ready to
    # use before this as this command will not build TensorFlow.
    bazel build inception/imagenet_train

    # run it
    bazel-bin/inception/imagenet_train --num_gpus=1 --batch_size=32 --train_dir=/tmp/imagenet_train --data_dir=/tmp/imagenet_data

Environment & Parameters

GPUs have been more commonly used for machine learning in recent years.

It is possible to train the model without GPUs. However, it may take several months or more to obtain practical results.

Even with a single GPU, GPU memory is a limiting factor: in our environment (an AWS EC2 p2.xlarge instance with a single Tesla K80 GPU), the batch size for training the Inception-v3 model had to be less than or equal to 32. In general, a larger batch size tends to lead to better results.

One of the comments in inception_train.py says:

    # With 8 Tesla K40's and a batch size = 256, the following setup achieves
    # precision@1 = 73.5% after 100 hours and 100K steps (20 epochs).

Following the comment, we used a p2.8xlarge instance, which has 8 Tesla K80 GPUs, and set the batch size to 256.

    bazel-bin/inception/imagenet_train --num_gpus=8 --batch_size=256 --train_dir=/tmp/imagenet_train --data_dir=/tmp/imagenet_data
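To relate the batch size to steps and epochs, here is a small arithmetic sketch. It assumes the ILSVRC2012 training set size of 1,281,167 images for the quoted comment and roughly 900,000 training images for our data (90% of about 1 million, so an approximation).

```python
def steps_per_epoch(num_train_images, batch_size):
    """Number of batches needed to see every training image once."""
    return num_train_images / float(batch_size)


BATCH_SIZE = 256

# ImageNet (ILSVRC2012) training set, to check the comment quoted above.
imagenet_steps = steps_per_epoch(1281167, BATCH_SIZE)
# Our data: about 90% of 1 million images (approximate).
our_steps = steps_per_epoch(900000, BATCH_SIZE)

print("ImageNet: %.1f steps per epoch" % imagenet_steps)
print("Ours:     %.1f steps per epoch" % our_steps)
print("100K steps on ImageNet: %.1f epochs" % (100000 / imagenet_steps))
```

With these numbers, 100K steps at batch size 256 come to about 20 passes over ImageNet, which agrees with the comment, and one pass over our training images takes about 3,500 steps.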

Monitoring

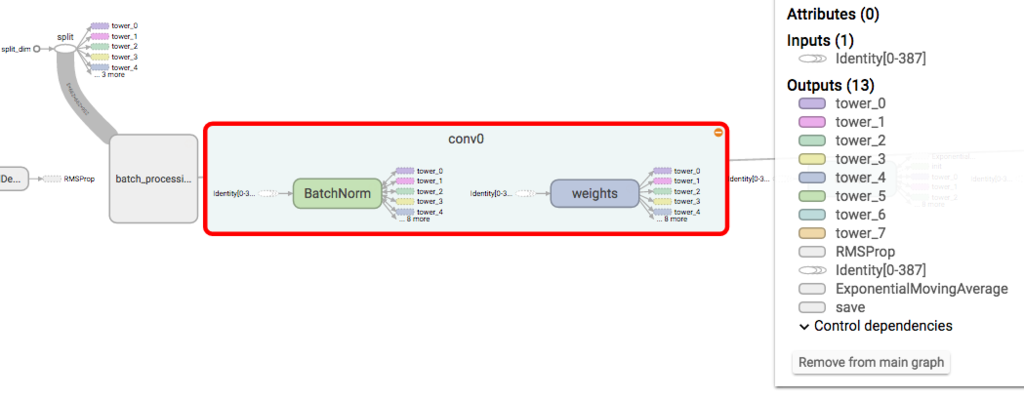

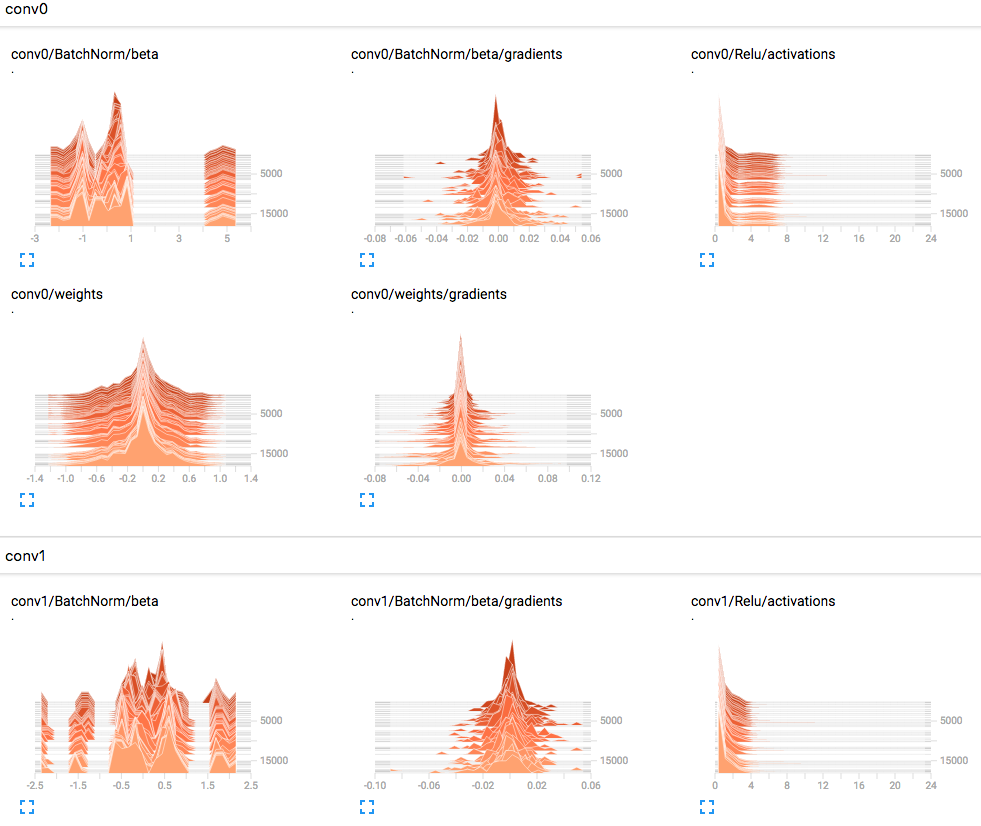

TensorBoard is one of TensorFlow’s features. It lets us monitor the training status and inspect models in a web browser.

A Part of the Model

Some of the Metrics

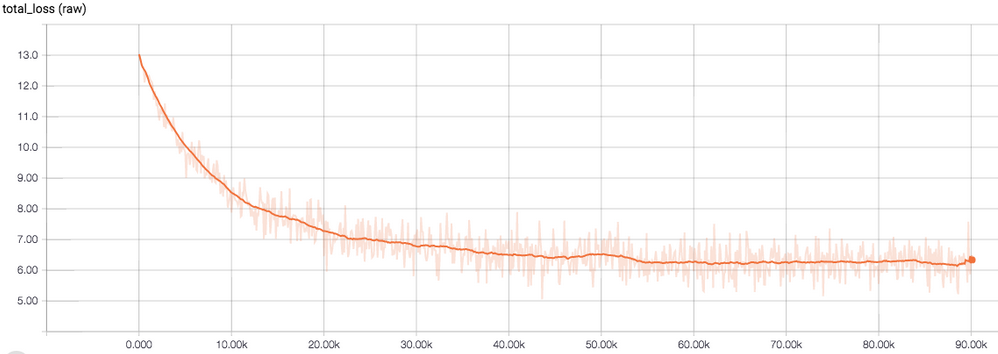

Training Loss

We stopped the training at 90K steps.

If we had continued the training, the training loss would have kept improving little by little and better results would have been obtained, but this is time-consuming.

    ...
    2016-12-21 07:40:47.901352: step 89910, loss = 5.92 (141.0 examples/sec; 1.816 sec/batch)
    2016-12-21 07:41:06.331693: step 89920, loss = 5.59 (144.3 examples/sec; 1.774 sec/batch)
    2016-12-21 07:41:25.166112: step 89930, loss = 6.59 (109.3 examples/sec; 2.341 sec/batch)
    2016-12-21 07:41:43.155784: step 89940, loss = 5.45 (147.1 examples/sec; 1.740 sec/batch)
    2016-12-21 07:42:01.680773: step 89950, loss = 6.84 (145.6 examples/sec; 1.759 sec/batch)
    2016-12-21 07:42:20.002877: step 89960, loss = 6.98 (144.5 examples/sec; 1.772 sec/batch)
    2016-12-21 07:42:38.857091: step 89970, loss = 6.56 (142.1 examples/sec; 1.801 sec/batch)
    2016-12-21 07:42:56.732429: step 89980, loss = 6.04 (142.7 examples/sec; 1.794 sec/batch)
    2016-12-21 07:43:14.753710: step 89990, loss = 6.13 (142.8 examples/sec; 1.793 sec/batch)
    2016-12-21 07:43:33.722591: step 90000, loss = 6.48 (148.0 examples/sec; 1.729 sec/batch)
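Log lines in this format are easy to summarize with a few lines of Python. The following is a minimal sketch, assuming the line format shown in the log above. The regular expression and the helper name `parse_line` are our own and are not part of the training script.

```python
import re

# Three of the log lines shown above, copied verbatim.
LOG_LINES = """\
2016-12-21 07:40:47.901352: step 89910, loss = 5.92 (141.0 examples/sec; 1.816 sec/batch)
2016-12-21 07:41:06.331693: step 89920, loss = 5.59 (144.3 examples/sec; 1.774 sec/batch)
2016-12-21 07:43:33.722591: step 90000, loss = 6.48 (148.0 examples/sec; 1.729 sec/batch)
""".splitlines()

PATTERN = re.compile(
    r"step (\d+), loss = ([\d.]+) \(([\d.]+) examples/sec; ([\d.]+) sec/batch\)")


def parse_line(line):
    """Return (step, loss, examples_per_sec, sec_per_batch), or None if no match."""
    match = PATTERN.search(line)
    if match is None:
        return None
    step, loss, rate, duration = match.groups()
    return int(step), float(loss), float(rate), float(duration)


records = [r for r in map(parse_line, LOG_LINES) if r is not None]
mean_loss = sum(r[1] for r in records) / len(records)
mean_sec_per_batch = sum(r[3] for r in records) / len(records)
# examples/sec multiplied by sec/batch should give back the batch size.
implied_batch_sizes = [int(round(r[2] * r[3])) for r in records]

print("parsed %d lines, mean loss %.2f, mean %.3f sec/batch"
      % (len(records), mean_loss, mean_sec_per_batch))
print("implied batch sizes:", implied_batch_sizes)
```

Multiplying the two throughput numbers on each line gives back the batch size of 256, which is a quick sanity check that the log matches the command line.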

Since it took around 2 days for 90K steps with K80s and 100 hours for 100K steps with K40s, the K80 may be about 2x faster than the K40.
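The arithmetic behind this estimate can be written out as follows. It is only a back-of-the-envelope sketch: "around 2 days" is taken as 48 hours, and the two runs used different data sets and machines, so the ratio is a rough indication rather than a benchmark.

```python
# Our run: 90K steps in around 2 days on 8 K80 GPUs.
k80_steps, k80_hours = 90000, 2 * 24
# Comment in inception_train.py: 100K steps in 100 hours on 8 K40 GPUs.
k40_steps, k40_hours = 100000, 100

k80_rate = k80_steps / float(k80_hours)  # steps per hour
k40_rate = k40_steps / float(k40_hours)
speedup = k80_rate / k40_rate

print("K80: %.0f steps/hour" % k80_rate)
print("K40: %.0f steps/hour" % k40_rate)
print("ratio: %.3f" % speedup)
```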

Evaluation

    # Build the model. Note that we need to make sure the TensorFlow is ready to
    # use before this as this command will not build TensorFlow.
    bazel build inception/imagenet_eval

    # run it
    bazel-bin/inception/imagenet_eval --checkpoint_dir=/tmp/imagenet_train --eval_dir=/tmp/imagenet_eval

Then, we got…

    precision @ 1 = 0.4332
    recall @ 5 = 0.7033
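As far as we understand, these two numbers are top-1 and top-5 accuracy: the fraction of evaluation images whose true category is the highest-scoring prediction, or is among the five highest-scoring predictions. Here is a minimal sketch of that computation in plain Python. The helper name `top_k_accuracy` and the toy scores are made up for illustration, and this is not the evaluation script's own code.

```python
def top_k_accuracy(score_rows, true_labels, k):
    """Fraction of examples whose true label is among the k highest scores."""
    hits = 0
    for scores, label in zip(score_rows, true_labels):
        ranked = sorted(range(len(scores)), key=lambda i: scores[i],
                        reverse=True)
        if label in ranked[:k]:
            hits += 1
    return hits / float(len(true_labels))


# Toy scores for 3 examples over 4 hypothetical classes.
toy_scores = [
    [0.10, 0.60, 0.20, 0.10],  # true label 1: highest score, a top-1 hit
    [0.40, 0.30, 0.20, 0.10],  # true label 1: second highest, a top-2 hit only
    [0.70, 0.15, 0.10, 0.05],  # true label 3: lowest score, a miss
]
toy_labels = [1, 1, 3]

top1 = top_k_accuracy(toy_scores, toy_labels, k=1)
top2 = top_k_accuracy(toy_scores, toy_labels, k=2)
print("top-1: %.4f, top-2: %.4f" % (top1, top2))
```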

Discussion

The accuracy was lower than we had expected…

Here are some possible reasons why we got such a result.

Some categories are very similar, such as ‘Men > Shoes > Sneakers’ and ‘Women > Shoes > Sneakers’. Some varieties of men’s and women’s clothes are also similar.

Moreover, some categories such as “Tickets” cannot be recognized without OCR (Optical Character Recognition). OCR is needed, for example, to classify tickets into events featuring Japanese artists, events featuring foreign artists, and things like bus and train tickets.

Sample Results

The scripts in tensorflow/models/inception are designed for batch training and evaluation.

When we want to use a trained model for non-batch image classification tasks, we need to write about 20 lines of code.

    import tensorflow as tf

    from inception import inception_model as inception
    from inception import image_processing

    FLAGS = tf.app.flags.FLAGS

    # Read a single JPEG and preprocess it in evaluation mode.
    image = image_processing.image_preprocessing(
        tf.read_file('/path/to/jpg'), bbox=[], train=False)
    image = tf.reshape(tf.cast(image, tf.float32),
                       shape=[1, FLAGS.image_size, FLAGS.image_size, 3])

    # Run the network and keep the 5 highest softmax scores.
    logits, _ = inception.inference(image, num_classes=1001)
    scores = tf.nn.softmax(logits)
    top_k = tf.nn.top_k(scores, k=5)

    # Restore the moving averages of the trained variables.
    variable_averages = tf.train.ExponentialMovingAverage(
        inception.MOVING_AVERAGE_DECAY)
    variables_to_restore = variable_averages.variables_to_restore()
    saver = tf.train.Saver(variables_to_restore)

    with tf.Session() as sess:
        init = tf.initialize_all_variables()
        sess.run(init)
        ckpt = tf.train.get_checkpoint_state('/path/to/train/dir')
        saver.restore(sess, ckpt.model_checkpoint_path)
        top_k_values, top_k_indices = sess.run(top_k)
        print('Label IDs: ', top_k_indices)
        print('Scores: ', top_k_values)

imagenet_eval does not report classification scores.

Since it would be helpful to know the confidence of each classification, we added the line scores = tf.nn.softmax(logits).

This value is actually calculated inside the Inception model, but it is not returned.

After this, we get the classification results for the image.

    ('Label IDs: ', array([[ 64, 202, 206, 292, 600]], dtype=int32))
    ('Scores: ', array([[ 0.57124287, 0.37565342, 0.00791241, 0.0067259 , 0.00576101]], dtype=float32))
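For readers who want to see what the softmax and top-k steps do to the logits, here is a minimal sketch in plain Python. The logits are made-up numbers and the helper names are our own. In the script above, the same work is done by tf.nn.softmax and tf.nn.top_k.

```python
import math


def softmax(logits):
    """Turn raw logits into scores that are positive and sum to 1."""
    largest = max(logits)
    # Subtracting the maximum avoids overflow and does not change the result.
    exps = [math.exp(x - largest) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def top_k(scores, k):
    """Return the k (index, score) pairs with the highest scores."""
    ranked = sorted(enumerate(scores), key=lambda pair: pair[1], reverse=True)
    return ranked[:k]


logits = [2.0, 1.0, 0.1, -1.0]  # made-up logits for 4 hypothetical classes
scores = softmax(logits)
for index, score in top_k(scores, 2):
    print("label %d: %.3f" % (index, score))
```

Because the scores sum to 1, they can be read as a rough confidence for each label. This is why the five scores in the output above add up to slightly less than 1: the remainder is spread over all the other labels.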

We applied this trained model to some images.

| image | score – category |
|---|---|
| | 0.310 – Women > Shoes > Sneakers<br>0.296 – Men > Shoes > Sneakers<br>0.067 – Sports > Other Sports > Basketball<br>0.045 – Sports > Other Sports > Athletics<br>0.041 – Babies & Kids > Shoes > Sneakers |
| | 0.173 – Electronics > Audio Equipments > Earphones<br>0.124 – Electronics > TV/Video Equipments > Cables<br>0.113 – Electronics > Audio Equipments > Cables<br>0.098 – Hobbies > Video Games > Game Consoles<br>0.072 – Electronics > Beauty & Health > Hair Irons |
| | 0.585 – Hobbies > Toys & Stuffies > Stuffies<br>0.107 – Babies & Kids > Toys > Music Boxes<br>0.095 – Babies & Kids > Toys > Rattles<br>0.028 – Women > Accessories > Key Rings<br>0.019 – Hobbies > Toys & Stuffies > Character Goods |
| | 0.663 – Others > Groceries > Fruits<br>0.300 – Others > Groceries > Vegetables<br>0.005 – Home > Annual Events > Gifts<br>0.002 – Others > Groceries > Processed Foods<br>0.002 – Others > Groceries > Others |
| | 0.665 – Cars & Motorcycles > Cars > Cars (non-Japanese)<br>0.192 – Cars & Motorcycles > Cars > Cars (Japanese)<br>0.055 – Cars & Motorcycles > Cars > Catalogs<br>0.008 – Cars & Motorcycles > Motorcycles > Motorcycles<br>0.006 – Cars & Motorcycles > Cars > Car Parts (Japanese) |

It looks practical!

Conclusion

I shared the results of our image classification experiment with deep learning.

Thanks to OSS such as TensorFlow, we need to write very little code, and knowledge of machine learning is not always required for a simple image classification task.

All we need nowadays for image classification tasks are large amounts of labeled data and huge computational resources.

Not only image classification but also other machine learning applications, such as speech recognition and natural language processing, are being improved dramatically by deep learning approaches.

However, deep learning still has some problems, e.g. huge computation time and training difficulties.

Currently, I am interested in model compression for deep neural networks, since deep models usually have a huge number of parameters.

If they could be represented with fewer parameters, deep learning would be more useful.

I hope we will be able to deliver a better user experience through machine learning.